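The form of compute resources has moved from physical servers to virtual machines and now to today's red-hot containers. That contest is the real battleground of data centers and cloud computing. Containers are lighter, shorter-lived and far more numerous than VMs. To simplify operating large container clusters, many container management and orchestration platforms have emerged (Swarm, Compose, Mesos, Kubernetes, etc.). They provide policy-level features such as resource scheduling, service discovery and autoscaling. To actually run containers at scale, however, networking is the most fundamental piece.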

Related terms

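IPAM: IP address management. There are two mainstream approaches: allocate CIDR-based IP address ranges, or assign an exact IP to each container. Either way, every scheduling unit gets a globally unique IP address.

Overlay: a new logical network on top of an existing Layer 2/Layer 3 network, formed by wrapping the original IP packets in an additional network protocol.

IPsec: a point-to-point encrypted communication protocol.

IP tunnel: a technique for encapsulating one IP packet inside another IP packet. A packet addressed to one IP address can then be encapsulated and forwarded to a different IP address. It is mainly used for mobile hosts and virtual private networks (VPN, Virtual Private Network). In both cases tunnels are set up statically, and each end of the tunnel has its own unique IP address.

Mobile IPv4 mainly uses three tunneling techniques: IP in IP, minimal encapsulation, and Generic Routing Encapsulation (GRE).

IP tunneling (IP encapsulation): the process by which a router encapsulates one network-layer protocol inside another protocol so that it can cross a network and reach another network.

IP-in-IP: an IP tunneling protocol that encapsulates one IP packet inside another IP packet.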
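A quick worked example of what IP-in-IP encapsulation costs per packet. It assumes a standard 1500-byte underlay MTU and an outer IPv4 header without options (20 bytes). These figures are illustrative and do not come from the article.

$$
\begin{aligned}
\text{overhead}_{\text{IPIP}} &= 20\ \text{bytes (outer IPv4 header)} \\
\text{MTU}_{\text{inner}} &\le \text{MTU}_{\text{underlay}} - 20 = 1500 - 20 = 1480\ \text{bytes}
\end{aligned}
$$

The calico.yaml manifest later in this article sets `veth_mtu: "1440"`. That value stays below the 1480-byte ceiling and leaves some headroom.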

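VXLAN: a solution jointly proposed by VMware, Cisco, Red Hat and others. It mainly addresses the fact that VLANs support too few virtual networks (4096). On a public cloud every tenant has its own VPC, so 4096 is clearly not enough. VXLAN supports about 16 million virtual networks, which is enough for public-cloud use.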
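Both limits follow from the width of the ID fields: an 802.1Q VLAN ID is 12 bits and a VXLAN Network Identifier (VNI) is 24 bits. The last two lines assume an IPv4 underlay with a 1500-byte MTU. They are included only to show the per-packet cost of VXLAN encapsulation.

$$
\begin{aligned}
\text{VLAN IDs} &= 2^{12} = 4096 \\
\text{VXLAN VNIs} &= 2^{24} = 16\,777\,216 \approx 1.6 \times 10^{7} \\
\text{overhead}_{\text{VXLAN}} &= \underbrace{20}_{\text{outer IPv4}} + \underbrace{8}_{\text{UDP}} + \underbrace{8}_{\text{VXLAN}} + \underbrace{14}_{\text{inner Ethernet}} = 50\ \text{bytes} \\
\text{MTU}_{\text{inner}} &\le 1500 - 50 = 1450\ \text{bytes}
\end{aligned}
$$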

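BGP (Border Gateway Protocol): the core decentralized routing protocol of the Internet backbone. The Internet is made up of many smaller autonomous systems (AS), and BGP implements the Layer 3 routing between them.

SDN: software-defined networking.

Routing workload IP addresses: when the network knows the workload IP addresses, it can route traffic directly between workloads.

Calico's two encapsulation types: IP-in-IP and VXLAN.

Cross-subnet: workload traffic usually needs encapsulation only when it crosses a router that cannot route workload IP addresses by itself. For IP-in-IP, Calico can encapsulate all traffic, no traffic, or only traffic that crosses a subnet boundary.

IP pool: an IP pool resource is the set of IP addresses from which Calico expects to allocate endpoint IPs.

IPIP: when the destination IP address is in a pool with IPIP enabled, packets are routed using IP-in-IP.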
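The encapsulation mode described above is configured per IP pool. The following is a minimal sketch of a Calico v3 `IPPool` that encapsulates only traffic crossing a subnet boundary. It makes three assumptions:

- `calicoctl` is installed and configured for the cluster's datastore.
- The pool that calico-node creates from `CALICO_IPV4POOL_CIDR` is named `default-ipv4-ippool`. Check this with `calicoctl get ippool`.
- The CIDR matches the one used in the installation section below.

```yaml
# Apply with: calicoctl apply -f ippool.yaml
# Assumption: the default pool is named default-ipv4-ippool (verify first).
apiVersion: projectcalico.org/v3
kind: IPPool
metadata:
  name: default-ipv4-ippool
spec:
  cidr: 192.168.0.0/16     # same range as CALICO_IPV4POOL_CIDR in calico.yaml
  ipipMode: CrossSubnet    # Always | CrossSubnet | Never
  natOutgoing: true        # SNAT Pod traffic to destinations outside Calico IP pools
```

`Always` corresponds to the `CALICO_IPV4POOL_IPIP: "Always"` setting in the manifest, and `Never` turns IP-in-IP off for the pool.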

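Block sizes: the default block size is /26 for IPv4 and /122 for IPv6, and either way a block holds 64 addresses. Blocks let addresses be allocated in groups to workloads running on the same host. Grouping addresses means fewer routes have to be exchanged between hosts and other BGP peers. A host that has used every address in its block is assigned an additional block. If no free blocks remain, the host can take addresses from blocks assigned to other hosts. Calico then adds specific routes for the borrowed addresses, which increases routing-table size.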
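The 64-address figure follows directly from the prefix lengths. The last line assumes the 192.168.0.0/16 pool used in the installation section below. It shows how many /26 blocks that pool can hand out before hosts have to start borrowing addresses.

$$
\begin{aligned}
\text{IPv4 block } /26: &\quad 2^{32-26} = 2^{6} = 64 \text{ addresses} \\
\text{IPv6 block } /122: &\quad 2^{128-122} = 2^{6} = 64 \text{ addresses} \\
\text{blocks in } 192.168.0.0/16: &\quad 2^{26-16} = 2^{10} = 1024 \text{ blocks}
\end{aligned}
$$

A host that owns one full block can therefore advertise a single /26 route instead of 64 individual /32 routes.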

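CIDR (Classless Inter-Domain Routing): a way of grouping IP addresses that assigns prefixes of arbitrary length, based on variable-length subnet masking (VLSM). It is used both to allocate IP addresses to users and to route IP packets efficiently across the Internet.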
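A short worked example of CIDR arithmetic. A /p IPv4 prefix fixes the first p bits and leaves 32 − p bits free. An address a belongs to prefix P/p exactly when masking a with the p-bit netmask yields P. The /16 example is the Pod network used later in this article.

$$
\begin{aligned}
N(p) &= 2^{32-p} \quad \text{addresses in a } /p \text{ prefix} \\
N(16) &= 2^{16} = 65\,536 \quad (192.168.0.0 \text{ to } 192.168.255.255,\ \text{mask } 255.255.0.0) \\
N(26) &= 2^{6} = 64 \quad (\text{mask } 255.255.255.192) \\
a \in P/p &\iff (a \mathbin{\&} \text{mask}_p) = P
\end{aligned}
$$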

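Overview of the Kubernetes network model

IP-per-Pod model: every Pod gets its own IP address. Port allocation, service discovery, load balancing, application configuration and migration can then treat a Pod much like a VM or a physical host.

In Kubernetes, IP addresses exist at Pod scope: containers in the same Pod share one network namespace, including its IP address. Containers in a Pod can therefore reach each other's ports on localhost. It also means they must coordinate port usage to avoid conflicts.

An implementation of the Kubernetes network model must meet the following basic requirements:

1. Pods on a node can communicate with all Pods on all nodes without NAT

2. Agents on a node (system daemons, kubelet, kube-proxy, etc.) can communicate with all Pods on that node

3. (For platforms that support running Pods in the host network, e.g. Linux) Pods in a node's host network can communicate with all Pods on all nodes without NAT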

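Networking is a central part of Kubernetes, and understanding exactly how it works is challenging. There are four networking problems to solve:

1. Highly coupled container-to-container communication: all containers in a Pod behave on the network as if they were on the same host, and reach each other's ports via localhost. Containers in a Pod also share some resources: CPU, RAM, volumes, etc. This design brings simplicity, security (ports bound to localhost are visible only inside the Pod) and performance. It also reduces isolation between containers: ports can conflict and no port is private to one container, though these are mostly minor issues. Users can therefore decide which containers belong in the same Pod by weighing resource sharing against isolation.

2. Pod-to-Pod communication: every Pod has its own IP address, so Pods can communicate without proxies. The IP and port a Pod sees for itself are the same ones other Pods use to reach it. The result is a flat, NAT-free address space.

3. Pod-to-Service communication (touched on earlier, when the Service resource object was introduced): the Service abstraction groups Pods under a common access policy (load balancing). It creates a virtual IP for clients and transparently proxies requests to the Pods in the Service. Every node runs a kube-proxy process, which programs iptables rules that capture traffic to Service IPs and redirect it to the correct backends. The result is highly available, low-overhead load balancing for traffic from clients on the same node.

4. External-to-Service communication: to reach containers from outside the cluster, we want highly available, high-performance load balancing in front of the target Kubernetes Service. Most public cloud providers are not yet flexible enough here.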
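To make the Pod-to-Service flow above concrete, here is a minimal sketch of a ClusterIP Service. The names, namespace, labels and ports are hypothetical. In iptables mode, kube-proxy on every node turns the Service's virtual IP into iptables rules that forward to the Pods matched by `selector`.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: backend            # hypothetical Service name
  namespace: demo          # hypothetical namespace
spec:
  type: ClusterIP          # virtual IP reachable only inside the cluster
  selector:
    app: backend           # Pods carrying this label become the Service's endpoints
  ports:
  - protocol: TCP
    port: 80               # port exposed on the Service's virtual IP
    targetPort: 8080       # port the backend containers listen on
```

For external-to-Service access, the usual starting point is to change `type` to `NodePort` or `LoadBalancer`. `LoadBalancer` depends on the cloud provider, which is where the flexibility concern above comes in.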

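Implementing the Kubernetes network model: Calico

Calico is an open-source networking and network security solution for containers, virtual machines and native host-based workloads. It supports a wide range of platforms, including Kubernetes, OpenShift, Docker EE, OpenStack and bare-metal services.

Why is Calico such a popular cloud networking solution?

1. Simple: traditional SDN is complex, hard to deploy and hard to troubleshoot. Calico removes that complexity with a simplified network model designed for the needs of cloud-native applications.

2. Scalable, distributed control plane: Calico combines IP routing protocols with the same consensus-based datastore that Kubernetes uses, giving it excellent scalability and a high level of fault tolerance.

3. Policy-driven network security: Calico builds powerful micro-segmentation on a policy language, plus a micro-firewall for any workload, which minimizes the attack surface.

4. No overlay required: in most environments Calico simply routes packets from the workload onto the underlying IP network without any extra headers. Where an overlay is needed, it uses lightweight encapsulation (e.g. IP-in-IP, VXLAN).

5. Calico supports both IPv4 and IPv6 networks.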
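The policy-driven security point above refers to Calico's own policy language, which selects endpoints by label expressions. The following is a minimal sketch of a namespaced Calico v3 `NetworkPolicy`. It assumes `calicoctl` access; the policy name, namespace, labels and port are hypothetical.

```yaml
# Apply with: calicoctl apply -f policy.yaml
apiVersion: projectcalico.org/v3
kind: NetworkPolicy
metadata:
  name: allow-frontend-to-backend   # hypothetical policy name
  namespace: demo                   # hypothetical namespace
spec:
  selector: app == 'backend'        # endpoints this policy applies to
  types:
  - Ingress
  ingress:
  - action: Allow
    protocol: TCP
    source:
      selector: app == 'frontend'   # only endpoints labelled app=frontend
    destination:
      ports:
      - 8080
```

Once an ingress policy selects an endpoint, Calico denies any ingress traffic that no policy allows. That default-deny behavior is how the per-workload firewall keeps the attack surface small.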

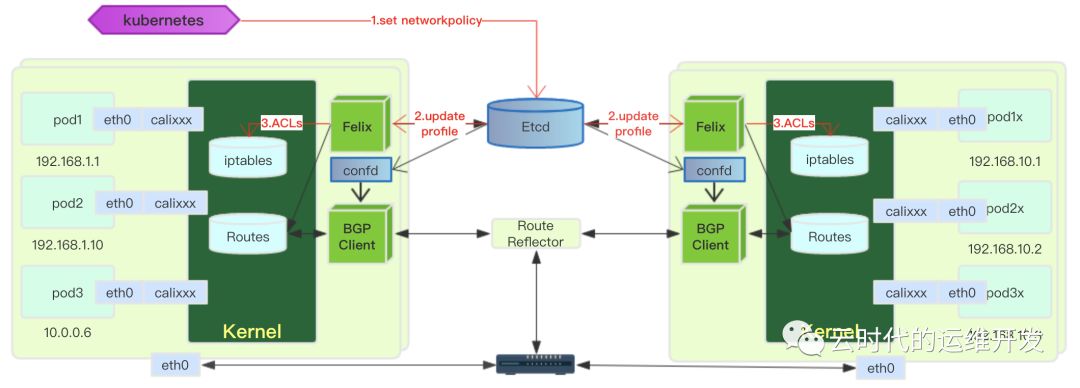

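Calico architecture and components

Felix: Calico's primary agent, which runs on every machine. It programs routes and ACLs to give the endpoints on that host the connectivity they need.

Its main tasks are:

Interface management: Felix programs information about interfaces into the kernel so that the kernel handles traffic from each endpoint correctly. It makes the host answer ARP requests from each workload with the host's own MAC address, and enables IP forwarding on the interfaces it manages. It also watches interfaces appear and disappear, so that their programming is applied at the right time.

Route programming: Felix programs routes to the endpoints on its host into the Linux kernel FIB (forwarding information base). This ensures that packets destined for those endpoints are forwarded correctly.

ACL programming: Felix programs ACLs into the Linux kernel. The ACLs ensure that only valid traffic flows between endpoints and that endpoints cannot bypass Calico's security measures.

Pushing ACL rules: Kubernetes defines a network policy -> the datastore (etcd, or the Kubernetes API when that is the datastore) stores the policy and notifies Felix -> Felix writes the ACLs into iptables.

Status reporting: Felix provides data about network health, including errors and problems hit while configuring its host. This data is written to etcd, where other network components and operators can see it.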
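The ACL push flow described above starts from an ordinary Kubernetes `NetworkPolicy`. The following is a minimal sketch; the name, namespace, labels and port are hypothetical. After `kubectl apply -f`, Felix on each node that hosts a matching Pod renders the policy into iptables rules.

```yaml
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: backend-allow-frontend   # hypothetical name
  namespace: demo                # hypothetical namespace
spec:
  podSelector:
    matchLabels:
      app: backend               # Pods the policy protects
  policyTypes:
  - Ingress
  ingress:
  - from:
    - podSelector:
        matchLabels:
          app: frontend          # only Pods labelled app=frontend in the same namespace
    ports:
    - protocol: TCP
      port: 8080
```

On a node, `iptables-save | grep cali` shows the rules Felix has written. Calico's chains are prefixed with `cali`, but their exact names are internal and may change between versions.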

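Orchestrator plugin: each cloud orchestration platform has its own orchestrator plugin. The plugin binds Calico more tightly into the orchestrator and lets users manage the Calico network through it. A good example is the Calico Neutron ML2 mechanism driver. It integrates with Neutron's ML2 plugin, so users can configure the Calico network through Neutron API calls, giving seamless integration with Neutron.

The orchestrator plugin's main tasks are:

API translation: the orchestrator has its own set of APIs for managing networks. The plugin's main job is to translate those API calls into Calico's data model and store the result in Calico's datastore.

Feedback: where necessary, the orchestrator plugin feeds information from the Calico network back to the orchestrator. This includes reporting Felix liveness and marking endpoints as failed if their network setup fails.

etcd: Calico uses etcd for communication between components and as a consistent datastore, which ensures Calico can always build an accurate network. Depending on the orchestrator plugin, etcd is either the primary datastore or a lightweight mirror of a separate datastore.

etcd components are spread across the whole deployment and split into two groups of machines: the core cluster and the proxies.

Its main tasks are:

Data storage: etcd stores the Calico network's data in a distributed, consistent and fault-tolerant way (for clusters of at least three etcd nodes).

Communication: non-etcd components watch certain points in the key space, so they see any changes and can respond promptly. This lets state be committed to the database and then programmed into the network.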

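BGP client (BIRD): Calico deploys a BGP client on every node that runs Felix. It reads the routing state that Felix programs into the kernel and distributes it around the data center.

Its main task is:

Route distribution: when Felix inserts routes into the Linux kernel FIB, the BGP client picks them up and distributes them to the other nodes, so that traffic is routed efficiently.

BGP route reflector: an optional component.

Its main task is:

Centralized route distribution: when Calico BGP clients advertise routes from their FIBs to the route reflector, the route reflector passes those routes on to the other nodes in the deployment.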
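The optional route-reflector setup above can be expressed with Calico v3 resources. The following is a minimal sketch, assuming `calicoctl` access and a Kubernetes datastore. It also assumes a Calico release that supports in-cluster route reflectors, with the chosen reflector node(s) already labelled and given a `routeReflectorClusterID` (see the comments). The label, node name, cluster ID and AS number are illustrative.

```yaml
# Apply with: calicoctl apply -f bgp-rr.yaml
# Assumed preparation on each reflector node (hypothetical node name and cluster ID):
#   kubectl label node rr-node-1 route-reflector=true
#   calicoctl patch node rr-node-1 -p '{"spec": {"bgp": {"routeReflectorClusterID": "224.0.0.1"}}}'
apiVersion: projectcalico.org/v3
kind: BGPPeer
metadata:
  name: peer-with-route-reflectors
spec:
  nodeSelector: all()                      # every node...
  peerSelector: route-reflector == 'true'  # ...peers with the labelled reflectors
---
apiVersion: projectcalico.org/v3
kind: BGPConfiguration
metadata:
  name: default                  # the cluster-wide BGP configuration
spec:
  nodeToNodeMeshEnabled: false   # stop every node from peering with every other node
  asNumber: 64512                # Calico's default private AS number
```

The BGPPeer comes first so that sessions to the reflectors are up before the full node-to-node mesh is switched off. In practice it is safer to apply the two resources separately, in that order.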

How Calico works

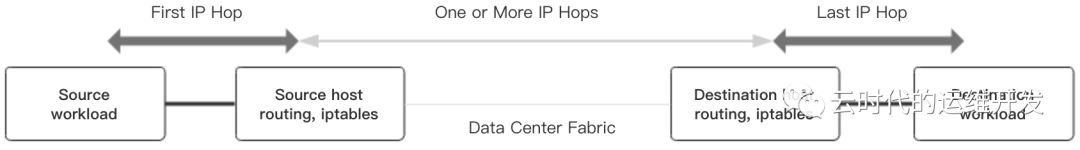

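In Calico's approach, the host that a workload runs on routes and firewalls the IP packets that workload sends, using the host's routing table and iptables. For a sending workload, Calico always makes the workload's host answer as the next-hop MAC address, whatever next-hop route the workload itself has configured. For packets addressed to a workload, the last IP hop is from that workload's host to the workload itself.

Calico network design: Calico is a pure Layer 3 network in which routing rules fully control packet flow. Any technology that can carry IP packets can serve as the interconnect fabric in a Calico network. Standard IP transports (e.g. MPLS and Ethernet) therefore work with Calico.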

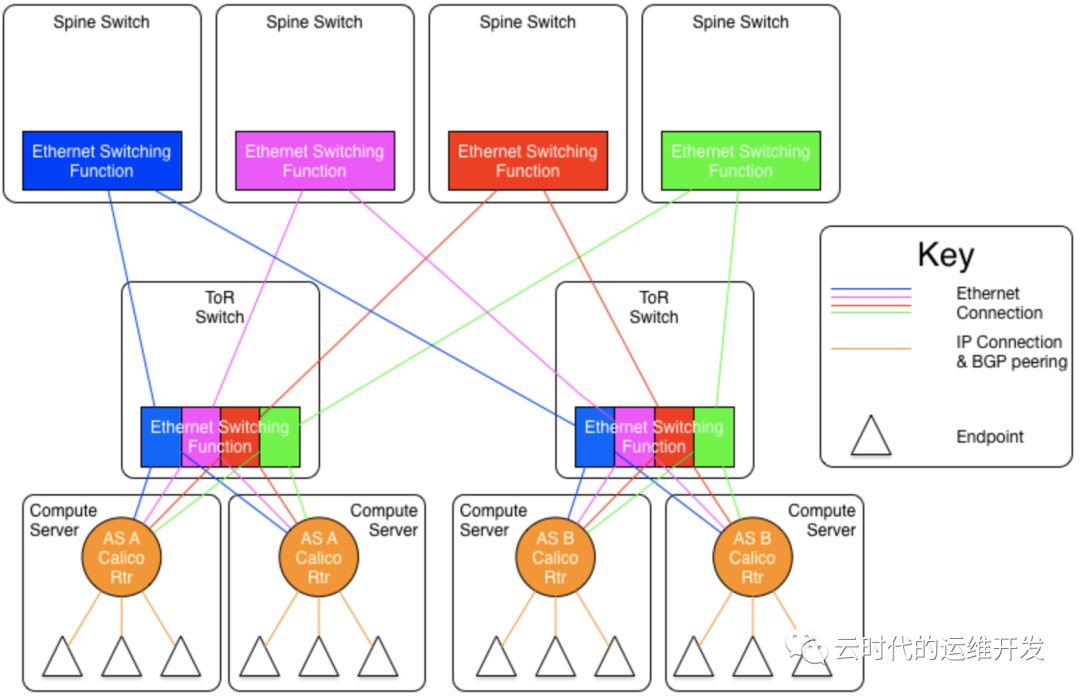

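Calico interconnect fabrics

1. Ethernet interconnect fabric

The design in the figure uses four Layer 2 networks to form a fabric for link reliability. Every node connects to all four Layer 2 networks, so it has four IPs in four different subnets. On each Layer 2 network, nodes set up BGP sessions in route reflector (RR) mode: one node acts as the RR and the others connect to it. The whole network thus ends up with four RRs, each responsible for BGP on one of the four networks.

When a node reaches an endpoint on another node, it has four equal-cost routes whose next hops are in different subnets. With ECMP (equal-cost multi-path routing), traffic is spread evenly across these four routes. This improves reliability and increases network throughput.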
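A simplified model of how ECMP spreads that traffic. It assumes hash-based, per-flow path selection, which is the common behavior in Linux and most switches because it keeps a flow's packets in order. $h$ stands for whatever header hash the device uses, and $C_{\text{link}}$ for the capacity of one path.

$$
\begin{aligned}
\text{next hop}(f) &= \text{NH}_{\,h(\text{src}_f,\ \text{dst}_f,\ \dots)\ \bmod\ N}, \qquad N = 4 \\
\text{expected share per path} &\approx \tfrac{1}{N} = 25\% \text{ of flows} \\
\text{aggregate capacity} &\le N \times C_{\text{link}} = 4\,C_{\text{link}}
\end{aligned}
$$

"Evenly" therefore holds across many flows; a single large flow still uses only one of the four paths.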

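2. IP fabric

If the underlying network is an IP fabric, the Layer 3 network is already reliable, so only one Calico network needs to be deployed.

Calico over IP fabrics offers two BGP network designs:

a. AS per rack: each rack forms its own AS, made up of the rack's nodes and its ToR switch, and each ToR switch peers with the core switches.

b. AS per server: each node is its own AS, and the ToR switches form a transit AS.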
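The AS-per-rack design could be expressed with Calico v3 resources. The following is a minimal sketch that assumes:

- a node label `rack`;
- a ToR switch at the address shown;
- a private AS number for the rack.

All of these are hypothetical. Every node in the rack is given the rack's AS number and peers with its own ToR over iBGP. The full node-to-node mesh would normally be disabled via `nodeToNodeMeshEnabled: false` in the cluster's `BGPConfiguration`.

```yaml
# Apply with: calicoctl apply -f rack1-peer.yaml
# Assumed preparation for each node in rack 1 (hypothetical node name):
#   kubectl label node rack1-node1 rack=rack-1
#   calicoctl patch node rack1-node1 -p '{"spec": {"bgp": {"asNumber": 64513}}}'
apiVersion: projectcalico.org/v3
kind: BGPPeer
metadata:
  name: rack1-tor                 # hypothetical peer name
spec:
  nodeSelector: rack == 'rack-1'  # every node in rack 1...
  peerIP: 10.0.1.1                # ...peers with the rack's ToR switch (hypothetical IP)
  asNumber: 64513                 # the ToR's AS, equal to the nodes' AS, so iBGP inside the rack
```

For the AS-per-server design, each node would instead get its own `asNumber`, and the BGPPeer's `asNumber` would be the ToR switches' transit AS.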

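An optimization, the "Downward Default Model", builds on the designs above and reduces the number of routes each device has to hold.

In the designs above, every node, ToR switch and core switch must hold the routes for the whole network. The optimized Downward Default Model works as follows:

- Each node advertises all of its routes upward (to its ToR), and the ToR advertises only a single default route downward (to the nodes)

- Each ToR advertises all of its routes upward (to the core switches), and the core switches advertise only a single default route downward (to the ToRs)

- A node knows only its local routes

- A ToR knows only the routes of the nodes attached to it

- The core switches know all routes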
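A back-of-the-envelope comparison of routing-table sizes, using hypothetical sizes: $R$ racks, $n$ nodes per rack, and $k$ block routes advertised per node. These values are assumptions chosen only to illustrate how the model scales.

$$
\begin{aligned}
\text{full tables (every device)} &= R\,n\,k \\
\text{Downward Default, node} &= k + 1 \quad (\text{local routes} + \text{one default}) \\
\text{Downward Default, ToR} &= n\,k + 1 \\
\text{Downward Default, core switch} &= R\,n\,k \\[4pt]
R = 10,\ n = 20,\ k = 4:&\quad R\,n\,k = 800,\quad k + 1 = 5,\quad n\,k + 1 = 81
\end{aligned}
$$

Only the core switches still carry the full table. Nodes and ToRs shrink to their own routes plus a default.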

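Kubernetes with Calico

Installing Calico on Kubernetes, and a walkthrough of calico.yaml

Hardware requirements (official recommendation)

- AMD64 processor

- 2 CPUs

- 2 GB RAM

- 10 GB of free disk space

- OS: Red Hat Enterprise Linux 7.x+, CentOS 7.x+, Ubuntu 16.04+, Debian 9.x+

When to install Calico: the network is configured as part of initializing the Kubernetes cluster.

Tip: for detailed Kubernetes installation steps, see the article "Kubernetes series: cluster installation, configuration and verification (Part 1)".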

```bash
# Initialize the cluster with kubeadm. --pod-network-cidr specifies the set of IPs that can be
# allocated as endpoint IPs: [192.168.0.1, 192.168.255.254]
kubeadm init --pod-network-cidr 192.168.0.0/16

# Download and install calico.yaml
wget https://docs.projectcalico.org/v3.7/manifests/calico.yaml
kubectl apply -f calico.yaml
```

Console output:

```text
configmap "calico-config" created
customresourcedefinition.apiextensions.k8s.io "felixconfigurations.crd.projectcalico.org" created
customresourcedefinition.apiextensions.k8s.io "ipamblocks.crd.projectcalico.org" created
customresourcedefinition.apiextensions.k8s.io "blockaffinities.crd.projectcalico.org" created
customresourcedefinition.apiextensions.k8s.io "ipamhandles.crd.projectcalico.org" created
customresourcedefinition.apiextensions.k8s.io "bgppeers.crd.projectcalico.org" created
customresourcedefinition.apiextensions.k8s.io "bgpconfigurations.crd.projectcalico.org" created
customresourcedefinition.apiextensions.k8s.io "ippools.crd.projectcalico.org" created
customresourcedefinition.apiextensions.k8s.io "hostendpoints.crd.projectcalico.org" created
customresourcedefinition.apiextensions.k8s.io "clusterinformations.crd.projectcalico.org" created
customresourcedefinition.apiextensions.k8s.io "globalnetworkpolicies.crd.projectcalico.org" created
customresourcedefinition.apiextensions.k8s.io "globalnetworksets.crd.projectcalico.org" created
customresourcedefinition.apiextensions.k8s.io "networksets.crd.projectcalico.org" created
customresourcedefinition.apiextensions.k8s.io "networkpolicies.crd.projectcalico.org" created
clusterrole.rbac.authorization.k8s.io "calico-kube-controllers" created
clusterrolebinding.rbac.authorization.k8s.io "calico-kube-controllers" created
clusterrole.rbac.authorization.k8s.io "calico-node" created
clusterrolebinding.rbac.authorization.k8s.io "calico-node" created
daemonset.extensions "calico-node" created
serviceaccount "calico-node" created
deployment.extensions "calico-kube-controllers" created
serviceaccount "calico-kube-controllers" created
```

Verifying Calico

```bash
# 1. Check that the cluster nodes are Ready
kubectl get node
## NAME          STATUS   ROLES    AGE     VERSION
## master-node   Ready    master   6h50m   v1.13.3
## worker-node   Ready    <none>   5h21m   v1.13.3

# 2. Check that the calico pods are Running
kubectl -n kube-system get pod
## NAME                READY   STATUS    RESTARTS   AGE
## calico-node-6dw59   2/2     Running   0          77d
## calico-node-smkv7   2/2     Running   8          77d
```

Walkthrough of calico.yaml

```yaml
# Source: calico/templates/calico-config.yaml
# This ConfigMap is used to configure a self-hosted Calico installation.
kind: ConfigMap
apiVersion: v1
metadata:
  name: calico-config
  namespace: kube-system
data:
  # Typha is recommended for clusters with more than 50 nodes; an etcd datastore is also supported.
  # Typha is disabled. For clusters of fewer than 50 nodes it can stay disabled (the default).
  typha_service_name: "none"
  # Configure the backend to use.
  calico_backend: "bird"
  # Configure the MTU to use
  veth_mtu: "1440"
  # The CNI network configuration to install on each node. The special
  # values in this config will be automatically populated.
  cni_network_config: |-
    {
      "name": "k8s-pod-network",
      "cniVersion": "0.3.0",
      "plugins": [
        {
          "type": "calico",
          "log_level": "info",
          "datastore_type": "kubernetes",
          "nodename": "__KUBERNETES_NODE_NAME__",
          "mtu": __CNI_MTU__,
          "ipam": {
              "type": "calico-ipam"
          },
          "policy": {
              "type": "k8s"
          },
          "kubernetes": {
              "kubeconfig": "__KUBECONFIG_FILEPATH__"
          }
        },
        {
          "type": "portmap",
          "snat": true,
          "capabilities": {"portMappings": true}
        }
      ]
    }
```

Calico's custom resource types: IPAMBlock, BGPPeer, IPPool, NetworkPolicy, etc.

```yaml
# Source: calico/templates/kdd-crds.yaml
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition   # declares a CRD
metadata:
  name: felixconfigurations.crd.projectcalico.org   # CRD name
spec:
  scope: Cluster                 # CRD scope: cluster-wide
  group: crd.projectcalico.org   # API group the CRD belongs to
  version: v1
  names:
    kind: FelixConfiguration     # resource kind
    plural: felixconfigurations
    singular: felixconfiguration
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: ipamblocks.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: IPAMBlock
    plural: ipamblocks
    singular: ipamblock
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: blockaffinities.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: BlockAffinity
    plural: blockaffinities
    singular: blockaffinity
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: ipamhandles.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: IPAMHandle
    plural: ipamhandles
    singular: ipamhandle
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: ipamconfigs.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: IPAMConfig
    plural: ipamconfigs
    singular: ipamconfig
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: bgppeers.crd.projectcalico.org
spec:
  scope: Cluster   # cluster-scoped; an individual BGPPeer can still target a single node
  group: crd.projectcalico.org
  version: v1
  names:
    kind: BGPPeer
    plural: bgppeers
    singular: bgppeer
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: bgpconfigurations.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: BGPConfiguration
    plural: bgpconfigurations
    singular: bgpconfiguration
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: ippools.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: IPPool
    plural: ippools
    singular: ippool
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: hostendpoints.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: HostEndpoint
    plural: hostendpoints
    singular: hostendpoint
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: clusterinformations.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: ClusterInformation
    plural: clusterinformations
    singular: clusterinformation
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: globalnetworkpolicies.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: GlobalNetworkPolicy
    plural: globalnetworkpolicies
    singular: globalnetworkpolicy
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: globalnetworksets.crd.projectcalico.org
spec:
  scope: Cluster
  group: crd.projectcalico.org
  version: v1
  names:
    kind: GlobalNetworkSet
    plural: globalnetworksets
    singular: globalnetworkset
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: networkpolicies.crd.projectcalico.org
spec:
  scope: Namespaced
  group: crd.projectcalico.org
  version: v1
  names:
    kind: NetworkPolicy
    plural: networkpolicies
    singular: networkpolicy
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: networksets.crd.projectcalico.org
spec:
  scope: Namespaced
  group: crd.projectcalico.org
  version: v1
  names:
    kind: NetworkSet
    plural: networksets
    singular: networkset
---
```

Calico ServiceAccounts, roles and permissions

```yaml
# Source: calico/templates/rbac.yaml
# Include a clusterrole for the kube-controllers component,
# and bind it to the calico-kube-controllers serviceaccount.
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: calico-kube-controllers
rules:
  # Nodes are watched to monitor for deletions.
  - apiGroups: [""]
    resources:
      - nodes
    verbs:
      - watch
      - list
      - get
  # Pods are queried to check for existence.
  - apiGroups: [""]
    resources:
      - pods
    verbs:
      - get
  # IPAM resources are manipulated when nodes are deleted.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - ippools
    verbs:
      - list
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - blockaffinities
      - ipamblocks
      - ipamhandles
    verbs:
      - get
      - list
      - create
      - update
      - delete
  # Needs access to update clusterinformations.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - clusterinformations
    verbs:
      - get
      - create
      - update
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: calico-kube-controllers
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: calico-kube-controllers
subjects:
- kind: ServiceAccount
  name: calico-kube-controllers
  namespace: kube-system
---
# Include a clusterrole for the calico-node DaemonSet,
# and bind it to the calico-node serviceaccount.
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: calico-node
rules:
  # The CNI plugin needs to get pods, nodes, and namespaces.
  - apiGroups: [""]
    resources:
      - pods
      - nodes
      - namespaces
    verbs:
      - get
  - apiGroups: [""]
    resources:
      - endpoints
      - services
    verbs:
      # Used to discover service IPs for advertisement.
      - watch
      - list
      # Used to discover Typhas.
      - get
  - apiGroups: [""]
    resources:
      - nodes/status
    verbs:
      # Needed for clearing NodeNetworkUnavailable flag.
      - patch
      # Calico stores some configuration information in node annotations.
      - update
  # Watch for changes to Kubernetes NetworkPolicies.
  - apiGroups: ["networking.k8s.io"]
    resources:
      - networkpolicies
    verbs:
      - watch
      - list
  # Used by Calico for policy information.
  - apiGroups: [""]
    resources:
      - pods
      - namespaces
      - serviceaccounts
    verbs:
      - list
      - watch
  # The CNI plugin patches pods/status.
  - apiGroups: [""]
    resources:
      - pods/status
    verbs:
      - patch
  # Calico monitors various CRDs for config.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - globalfelixconfigs
      - felixconfigurations
      - bgppeers
      - globalbgpconfigs
      - bgpconfigurations
      - ippools
      - ipamblocks
      - globalnetworkpolicies
      - globalnetworksets
      - networkpolicies
      - networksets
      - clusterinformations
      - hostendpoints
    verbs:
      - get
      - list
      - watch
  # Calico must create and update some CRDs on startup.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - ippools
      - felixconfigurations
      - clusterinformations
    verbs:
      - create
      - update
  # Calico stores some configuration information on the node.
  - apiGroups: [""]
    resources:
      - nodes
    verbs:
      - get
      - list
      - watch
  # These permissions are only required for upgrade from v2.6, and can
  # be removed after upgrade or on fresh installations.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - bgpconfigurations
      - bgppeers
    verbs:
      - create
      - update
  # These permissions are required for Calico CNI to perform IPAM allocations.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - blockaffinities
      - ipamblocks
      - ipamhandles
    verbs:
      - get
      - list
      - create
      - update
      - delete
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - ipamconfigs
    verbs:
      - get
  # Block affinities must also be watchable by confd for route aggregation.
  - apiGroups: ["crd.projectcalico.org"]
    resources:
      - blockaffinities
    verbs:
      - watch
  # The Calico IPAM migration needs to get daemonsets. These permissions can be
  # removed if not upgrading from an installation using host-local IPAM.
  - apiGroups: ["apps"]
    resources:
      - daemonsets
    verbs:
      - get
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRoleBinding
metadata:
  name: calico-node
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: calico-node
subjects:
- kind: ServiceAccount
  name: calico-node
  namespace: kube-system
```

Calico deploys its CNI plugin and network configuration as a DaemonSet

```yaml
# Source: calico/templates/calico-node.yaml
# This manifest installs the calico-node container, as well
# as the CNI plugins and network config on
# each master and worker node in a Kubernetes cluster.
kind: DaemonSet
apiVersion: extensions/v1beta1
metadata:
  name: calico-node
  namespace: kube-system
  labels:
    k8s-app: calico-node
spec:
  selector:
    matchLabels:
      k8s-app: calico-node
  updateStrategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  template:
    metadata:
      labels:
        k8s-app: calico-node
      annotations:
        # This, along with the CriticalAddonsOnly toleration below,
        # marks the pod as a critical add-on, ensuring it gets
        # priority scheduling and that its resources are reserved
        # if it ever gets evicted.
        scheduler.alpha.kubernetes.io/critical-pod: ''
    spec:
      nodeSelector:
        beta.kubernetes.io/os: linux
      hostNetwork: true
      tolerations:
        # Make sure calico-node gets scheduled on all nodes.
        - effect: NoSchedule
          operator: Exists
        # Mark the pod as a critical add-on for rescheduling.
        - key: CriticalAddonsOnly
          operator: Exists
        - effect: NoExecute
          operator: Exists
      serviceAccountName: calico-node
      # Minimize downtime during a rolling upgrade or deletion; tell Kubernetes to do a "force
      # deletion": https://kubernetes.io/docs/concepts/workloads/pods/pod/#termination-of-pods.
      terminationGracePeriodSeconds: 0
      initContainers:
        # This container performs upgrade from host-local IPAM to calico-ipam.
        # It can be deleted if this is a fresh installation, or if you have already
        # upgraded to use calico-ipam.
        - name: upgrade-ipam
          image: calico/cni:v3.7.2
          command: ["/opt/cni/bin/calico-ipam", "-upgrade"]
          env:
            - name: KUBERNETES_NODE_NAME
              valueFrom:
                fieldRef:
                  fieldPath: spec.nodeName
            - name: CALICO_NETWORKING_BACKEND
              valueFrom:
                configMapKeyRef:
                  name: calico-config
                  key: calico_backend
          volumeMounts:
            - mountPath: /var/lib/cni/networks
              name: host-local-net-dir
            - mountPath: /host/opt/cni/bin
              name: cni-bin-dir
        # This container installs the CNI binaries
        # and CNI network config file on each node.
        - name: install-cni
          image: calico/cni:v3.7.2
          command: ["/install-cni.sh"]
          env:
            # Name of the CNI config file to create.
            - name: CNI_CONF_NAME
              value: "10-calico.conflist"
            # The CNI network config to install on each node.
            - name: CNI_NETWORK_CONFIG
              valueFrom:
                configMapKeyRef:
                  name: calico-config
                  key: cni_network_config
            # Set the hostname based on the k8s node name.
            - name: KUBERNETES_NODE_NAME
              valueFrom:
                fieldRef:
                  fieldPath: spec.nodeName
            # CNI MTU Config variable
            - name: CNI_MTU
              valueFrom:
                configMapKeyRef:
                  name: calico-config
                  key: veth_mtu
            # Prevents the container from sleeping forever.
            - name: SLEEP
              value: "false"
          volumeMounts:
            - mountPath: /host/opt/cni/bin
              name: cni-bin-dir
            - mountPath: /host/etc/cni/net.d
              name: cni-net-dir
      containers:
        # Runs calico-node container on each Kubernetes node. This
        # container programs network policy and routes on each
        # host.
        - name: calico-node
          image: calico/node:v3.7.2
          env:
            # Use Kubernetes API as the backing datastore.
            - name: DATASTORE_TYPE
              value: "kubernetes"
            # Wait for the datastore.
            - name: WAIT_FOR_DATASTORE
              value: "true"
            # Set based on the k8s node name.
            - name: NODENAME
              valueFrom:
                fieldRef:
                  fieldPath: spec.nodeName
            # Choose the backend to use.
            - name: CALICO_NETWORKING_BACKEND
              valueFrom:
                configMapKeyRef:
                  name: calico-config
                  key: calico_backend
            # Cluster type to identify the deployment type
            - name: CLUSTER_TYPE
              value: "k8s,bgp"
            # Auto-detect the BGP IP address.
            - name: IP
              value: "autodetect"
            # Enable IPIP
            - name: CALICO_IPV4POOL_IPIP
              value: "Always"
            # Set MTU for tunnel device used if ipip is enabled
            - name: FELIX_IPINIPMTU
              valueFrom:
                configMapKeyRef:
                  name: calico-config
                  key: veth_mtu
            # The default IPv4 pool to create on startup if none exists. Pod IPs will be
            # chosen from this range. Changing this value after installation will have
            # no effect. This should fall within `--cluster-cidr`.
            - name: CALICO_IPV4POOL_CIDR
              value: "192.168.0.0/16"
            # Disable file logging so `kubectl logs` works.
            - name: CALICO_DISABLE_FILE_LOGGING
              value: "true"
            # Set Felix endpoint to host default action to ACCEPT.
            - name: FELIX_DEFAULTENDPOINTTOHOSTACTION
              value: "ACCEPT"
            # Disable IPv6 on Kubernetes.
            - name: FELIX_IPV6SUPPORT
              value: "false"
            # Set Felix logging to "info"
            - name: FELIX_LOGSEVERITYSCREEN
              value: "info"
            - name: FELIX_HEALTHENABLED
              value: "true"
          securityContext:
            privileged: true
          resources:
            requests:
              cpu: 250m
          livenessProbe:
            httpGet:
              path: /liveness
              port: 9099
              host: localhost
            periodSeconds: 10
            initialDelaySeconds: 10
            failureThreshold: 6
          readinessProbe:
            exec:
              command:
              - /bin/calico-node
              - -bird-ready
              - -felix-ready
            periodSeconds: 10
          volumeMounts:
            - mountPath: /lib/modules
              name: lib-modules
              readOnly: true
            - mountPath: /run/xtables.lock
              name: xtables-lock
              readOnly: false
            - mountPath: /var/run/calico
              name: var-run-calico
              readOnly: false
            - mountPath: /var/lib/calico
              name: var-lib-calico
              readOnly: false
      volumes:
        # Used by calico-node.
        - name: lib-modules
          hostPath:
            path: /lib/modules
        - name: var-run-calico
          hostPath:
            path: /var/run/calico
        - name: var-lib-calico
          hostPath:
            path: /var/lib/calico
        - name: xtables-lock
          hostPath:
            path: /run/xtables.lock
            type: FileOrCreate
        # Used to install CNI.
        - name: cni-bin-dir
          hostPath:
            path: /opt/cni/bin
        - name: cni-net-dir
          hostPath:
            path: /etc/cni/net.d
        # Mount in the directory for host-local IPAM allocations. This is
        # used when upgrading from host-local to calico-ipam, and can be removed
        # if not using the upgrade-ipam init container.
        - name: host-local-net-dir
          hostPath:
            path: /var/lib/cni/networks
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: calico-node
  namespace: kube-system
```

Deploying the Calico controller

```yaml
# Source: calico/templates/calico-kube-controllers.yaml
# See https://github.com/projectcalico/kube-controllers
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: calico-kube-controllers
  namespace: kube-system
  labels:
    k8s-app: calico-kube-controllers
  annotations:
    scheduler.alpha.kubernetes.io/critical-pod: ''
spec:
  # The controller can only have a single active instance.
  replicas: 1
  strategy:
    type: Recreate
  template:
    metadata:
      name: calico-kube-controllers
      namespace: kube-system
      labels:
        k8s-app: calico-kube-controllers
    spec:
      nodeSelector:
        beta.kubernetes.io/os: linux
      tolerations:
        # Mark the pod as a critical add-on for rescheduling.
        - key: CriticalAddonsOnly
          operator: Exists
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      serviceAccountName: calico-kube-controllers
      containers:
        - name: calico-kube-controllers
          image: calico/kube-controllers:v3.7.2
          env:
            # Choose which controllers to run.
            - name: ENABLED_CONTROLLERS
              value: node
            - name: DATASTORE_TYPE
              value: kubernetes
          readinessProbe:
            exec:
              command:
              - /usr/bin/check-status
              - -r
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: calico-kube-controllers
  namespace: kube-system
```

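Summary

Networking is the foundation for running large container clusters. A Kubernetes cluster has to solve four communication problems: container to container, Pod to Pod, Pod to Service, and external to Service.

Kubernetes defines an abstract network model, and network solutions implement it as CNI plugins built on SDN. Calico is one of these solutions: it gives container workloads in the cluster secure network connectivity. This article used Calico as an example and briefly introduced its features and installation.

Next: Kubernetes cluster security

For more content, follow the WeChat official account `云时代的运维开发`.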