This article was translated with authorization from DCD and published on the DeepKnowledge platform.

With AI workloads set to dominate the future, there is uncertainty around the hardware that’s aiming to dethrone the GPU

January 24, 2020

By Max Smolaks

This feature appeared in the January issue of DCD Magazine.

In 1971, Intel, then a manufacturer of random access memory, officially released the 4004, its first single-chip central processing unit, kickstarting nearly 50 years of CPU dominance in computing.

In 1989, while working at CERN, Tim Berners-Lee used a NeXT computer, designed around the Motorola 68030 CPU, to launch the first website, making that machine the world’s first web server.

CPUs were the most expensive, the most scientifically advanced, and the most power-hungry parts of a typical server: they became the beating hearts of the digital age, and semiconductors turned into the benchmark for our species' advancement.

Intel's domination

Few might know about the Shannon limit or Landauer's principle, but everyone knows about the existence of Moore’s Law, even if they have never seated a processor in their life. CPUs have entered popular culture and, today, Intel rules this market, with a near-monopoly supported by its massive R&D budgets and extensive fabrication facilities, better known as ‘fabs.’

But in the past two or three years, something strange has been happening: data centers started housing more and more processors that weren’t CPUs.

It began with the arrival of GPUs. It turned out that these massively parallel processors weren’t just useful for rendering video games and mining magical coins, but also for training machines to learn - and chipmakers grabbed onto this new revenue stream for dear life.

Back in August, Nvidia’s CEO Jen-Hsun ‘Jensen’ Huang called AI technologies the “single most powerful force of our time.” During the earnings call, he noted that there were currently more than 4,000 AI start-ups around the world. He also touted examples of enterprise apps that could take weeks to run on CPUs, but just hours on GPUs.

A handful of silicon designers looked at the success of GPUs as they were flying off the shelves, and thought: we can do better. One of them is Xilinx, a venerable specialist in programmable logic devices. The granddaddy of custom silicon, it is credited with inventing the first field-programmable gate array (FPGA) back in 1985.

Applications for FPGAs range from telecoms to medical imaging, hardware emulation, and of course, machine learning workloads. But Xilinx wasn’t happy with adapting old chips for new use cases, the way Nvidia had done, and in 2018, it announced the adaptive compute acceleration platform (ACAP) - a brand new chip architecture designed specifically for AI.

“Data centers are one of several markets being disrupted,” CEO Victor Peng said in a keynote at the recent Xilinx Developer Forum in Amsterdam. “We all hear about the fact that there's zettabytes of data being generated every single month, most of them unstructured. And it takes a tremendous amount of compute capability to process all that data. And on the other side of things, you have challenges like the end of Moore's Law, and power being a problem.

“Because of all these reasons, John Hennessy and Dave Patterson - two icons in the computer science world - both recently stated that we were entering a new golden age of architectural development.”

He continued: “Simply put, the traditional architecture that’s been carrying the industry for the last 40 to 50 years is totally inadequate for the level of data generation and data processing that’s needed today.”

“It is important to remember that it’s really, really early in AI,” Peng later told DCD. “There’s a growing feeling that convolutional and deep neural networks aren’t the right approach. This whole black box thing - where you don’t know what’s going on and you can get wildly wrong results - is a little disconcerting for folks.”

A new approach

Salil Raje, head of the Xilinx data center group, warned: “If you’re betting on old hardware and software, you are going to have wasted cycles. You want to use our adaptability and map your requirements to it right now, and then longevity. When you’re doing ASICs, you’re making a big bet.”

Another company making waves is British chip designer Graphcore, which is quickly becoming one of the most exciting hardware start-ups of the moment.

Graphcore’s GC2 IPU has the world’s highest transistor count for a device that’s actually shipping to customers - 23,600,000,000 of them. That’s not nearly enough to keep up with the demands of Moore’s Law - but it’s a whole lot more transistors than in Nvidia’s V100 GPU, or AMD’s monstrous 32-core Epyc CPU.

“The honest truth is, people don’t know what sort of hardware they are going to need for AI in the near future,” Nigel Toon, the CEO of Graphcore, told us in August. “It’s not like building chips for a mature technology challenge. If you know the challenge, you just have to engineer better than other people.

“The workload is very different, neural networks and other structures of interest change from year to year. That’s why we have a research group, it’s sort of a long-distance radar.

“There are several massive technology shifts. One is AI as a workload - we’re not writing programs to tell a machine what to do anymore, we’re writing programs that tell a machine how to learn, and then the machine learns from data. So your programming has gone kind of ‘meta.’ We’re even having arguments across the industry about the way to represent numbers in computers. That hasn’t happened since 1980.

“The second technology shift is the end of traditional scaling of silicon. We need a million times more compute power, but we’re not going to get it from silicon shrinking. So we’ve got to be able to learn how to be more efficient in the silicon, and also how to build lots of chips into bigger systems.

“The third technology shift is the fact that the only way of satisfying this compute requirement at the end of silicon scaling - and fortunately, it is possible because the workload exposes lots of parallelism - is to build massively parallel computers.”

Toon is nothing if not ambitious: he hopes to grow to “a couple of thousand employees” over the next few years, and to take the fight to GPUs and their progenitor.

Cerebras

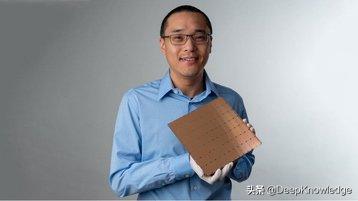

Then there’s Cerebras, the American start-up that surprised everyone in August by announcing a mammoth chip measuring nearly 8.5 by 8.5 inches, and featuring 400,000 cores, all optimized for deep learning, accompanied by a whopping 18GB of on-chip memory.

“Deep learning has unique, massive, and growing computational requirements which are not well-matched by legacy machines like GPUs, which were fundamentally designed for other work,” Dr. Andy Hock, Cerebras director, said.

Huawei, as always, is going its own way: the embattled Chinese vendor has been churning out proprietary chips for years through its HiSilicon subsidiary, originally for its wide array of networking equipment, more recently for its smartphones.

For its next trick, Huawei is disrupting the AI hardware market with the Ascend line - including everything from tiny inference devices to the Ascend 910, which it claims is the most powerful AI processor in the world. Add a bunch of these together, and you get the Atlas 900, the world's fastest AI training cluster, currently used by Chinese astronomy researchers.

And of course, the list wouldn’t be complete without Intel’s Nervana, the somewhat late arrival to the AI scene. Just like Xilinx and Graphcore, Nervana believes that AI workloads of the future will require specialized chips, built from the ground up to support machine learning, and not just standard chips adapted for this purpose.

“AI is very new and nascent, and it’s going to keep changing,” Xilinx’s Salil Raje told DCD.

“The market is going to change, the technology, the innovation, the research - all it takes is one PhD student to completely revolutionize the field all over again, and then all of these chips become useless. It’s waiting for that one research paper.”

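Translation: Cheng Xinxin (程鑫鑫), IDC account manager, Cloud Business Center, China Telecom Shanxi Branch; platinum member of the DKV (Deep Knowledge Volunteer) program

Chinese proofreading: Eric

Layout editing: Amy

Original source: DCD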

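Public account statement

On copyright:

This article is not an official Chinese version of the original. It is provided only for study and reference by readers in China and may not be used for any commercial purpose; the English original is authoritative. Do not repost the Chinese version without written authorization from the DeepKnowledge public account.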
