This article shows how to build a neural network with PyTorch to solve a classification task.

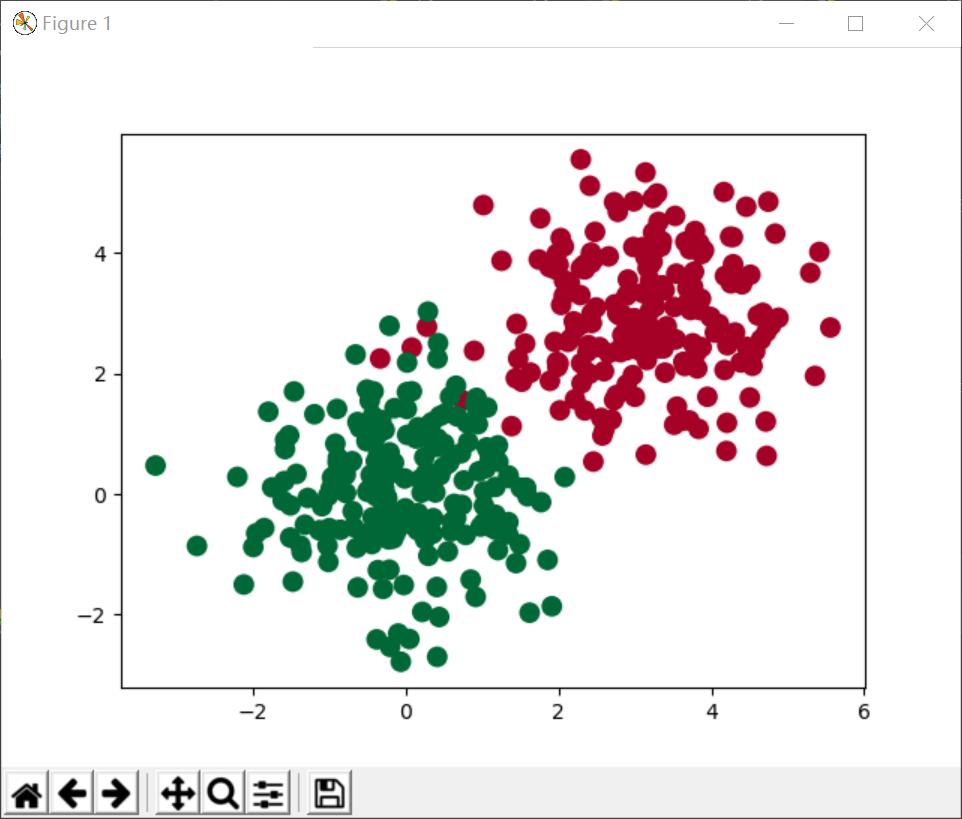

原始待分类的数据如下

原始分类数据

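We train in mini-batches for 10 epochs, with 30 samples per batch. This time we do not define the network through inheritance (subclassing `torch.nn.Module`); instead we build it directly with `torch.nn.Sequential`.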
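For comparison, here is roughly how the same architecture looks in the subclassing style. This is a minimal sketch: the class name `Net` and the variable names `seq_net` and `cls_net` are hypothetical. It copies the `Sequential` weights into the subclass to show that both styles compute the same function.

```python
import torch


class Net(torch.nn.Module):
    """Hypothetical subclass-based equivalent of the Sequential network."""

    def __init__(self, n_feature=2, n_hidden=10, n_output=2):
        super().__init__()
        self.hidden = torch.nn.Linear(n_feature, n_hidden)  # hidden layer
        self.out = torch.nn.Linear(n_hidden, n_output)      # output layer

    def forward(self, x):
        x = torch.relu(self.hidden(x))  # same ReLU activation as in Sequential
        return self.out(x)              # raw class scores (logits), no softmax


seq_net = torch.nn.Sequential(
    torch.nn.Linear(2, 10),
    torch.nn.ReLU(),
    torch.nn.Linear(10, 2),
)
cls_net = Net()

# Copy the Sequential weights into the subclass so both compute the same function
with torch.no_grad():
    cls_net.hidden.weight.copy_(seq_net[0].weight)
    cls_net.hidden.bias.copy_(seq_net[0].bias)
    cls_net.out.weight.copy_(seq_net[2].weight)
    cls_net.out.bias.copy_(seq_net[2].bias)

sample = torch.randn(5, 2)
print(torch.allclose(seq_net(sample), cls_net(sample)))  # True
```

`Sequential` is convenient when data simply flows through the layers in order. A subclass with a custom `forward` is more flexible when the network needs branches or reused layers.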

The code is as follows:

```python
import torch
import torch.utils.data as Data
import matplotlib.pyplot as plt

# Synthetic data
n_data = torch.ones(200, 2)                  # base shape of the data
x0 = torch.normal(3 * n_data, 1)             # class 0 x data (tensor), shape=(200, 2)
y0 = torch.zeros(200)                        # class 0 y data (tensor), shape=(200,)
x1 = torch.normal(1 * n_data, 1)             # class 1 x data (tensor), shape=(200, 2)
y1 = torch.ones(200)                         # class 1 y data (tensor), shape=(200,)

# x and y must be in exactly this form (torch.cat concatenates the data)
x = torch.cat((x0, x1), 0).type(torch.FloatTensor)  # FloatTensor = 32-bit floating point, shape=(400, 2)
y = torch.cat((y0, y1), 0).type(torch.LongTensor)   # LongTensor = 64-bit integer, shape=(400,)

net = torch.nn.Sequential(
    torch.nn.Linear(2, 10),
    torch.nn.ReLU(),
    torch.nn.Linear(10, 2),
)

BATCH_SIZE = 30

torch_dataset = Data.TensorDataset(x, y)
loader = Data.DataLoader(
    dataset=torch_dataset,   # torch TensorDataset format
    batch_size=BATCH_SIZE,   # mini-batch size
    shuffle=True,            # reshuffle the data every epoch
)

optimizer = torch.optim.SGD(net.parameters(), lr=0.02)
loss_func = torch.nn.CrossEntropyLoss()  # the target label is NOT one-hot encoded

plt.ion()  # interactive mode so the plot updates during training

for epoch in range(10):  # train on the entire dataset 10 times
    for step, (batch_x, batch_y) in enumerate(loader):  # one training step per mini-batch
        out = net(batch_x)               # forward pass on the batch
        loss = loss_func(out, batch_y)   # must be (1. nn output, 2. target)
        optimizer.zero_grad()            # clear gradients from the previous step
        loss.backward()                  # backpropagation, compute gradients
        optimizer.step()                 # apply gradients

    # plot the predictions on the full dataset after each epoch
    plt.cla()
    with torch.no_grad():
        out = net(x)
    prediction = torch.max(out, 1)[1]    # index of the highest score = predicted class
    pred_y = prediction.numpy()
    target_y = y.numpy()
    plt.scatter(x.numpy()[:, 0], x.numpy()[:, 1], c=pred_y, s=100, lw=0, cmap='RdYlGn')
    accuracy = float((pred_y == target_y).astype(int).sum()) / float(target_y.size)
    plt.text(1.5, 3, 'Accuracy=%.2f' % accuracy, fontdict={'size': 20, 'color': 'red'})
    plt.pause(0.1)

plt.ioff()
plt.show()
```
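Two details of the training loop above can be checked in isolation. The minimal sketch below uses random stand-in tensors (an assumption; only the sizes match the article's data). It shows that 400 samples with a batch size of 30 give 14 steps per epoch, with the last batch holding only 10 samples. It also shows that `CrossEntropyLoss` takes raw scores plus integer class indices, and matches `log_softmax` followed by `nll_loss`.

```python
import torch
import torch.nn.functional as F
import torch.utils.data as Data

# Stand-in data with the same sizes as above: 400 samples, 2 features, labels 0/1
x = torch.randn(400, 2)
y = torch.randint(0, 2, (400,))

loader = Data.DataLoader(Data.TensorDataset(x, y), batch_size=30, shuffle=True)
batch_sizes = [batch_x.shape[0] for batch_x, _ in loader]
print(len(loader), batch_sizes[-1])  # 14 10 -> 13 full batches of 30, then the remaining 10

# CrossEntropyLoss takes raw scores (logits) and integer class indices, not one-hot vectors
logits = torch.tensor([[2.0, 0.5], [0.1, 1.5]])
target = torch.tensor([0, 1])           # class indices, dtype int64
loss = torch.nn.CrossEntropyLoss()(logits, target)

# It is equivalent to log_softmax followed by the negative log-likelihood loss
manual = F.nll_loss(F.log_softmax(logits, dim=1), target)
print(torch.allclose(loss, manual))     # True
```

Over 10 epochs this adds up to 140 parameter updates. If the smaller final batch is undesirable, `DataLoader` also accepts `drop_last=True`.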

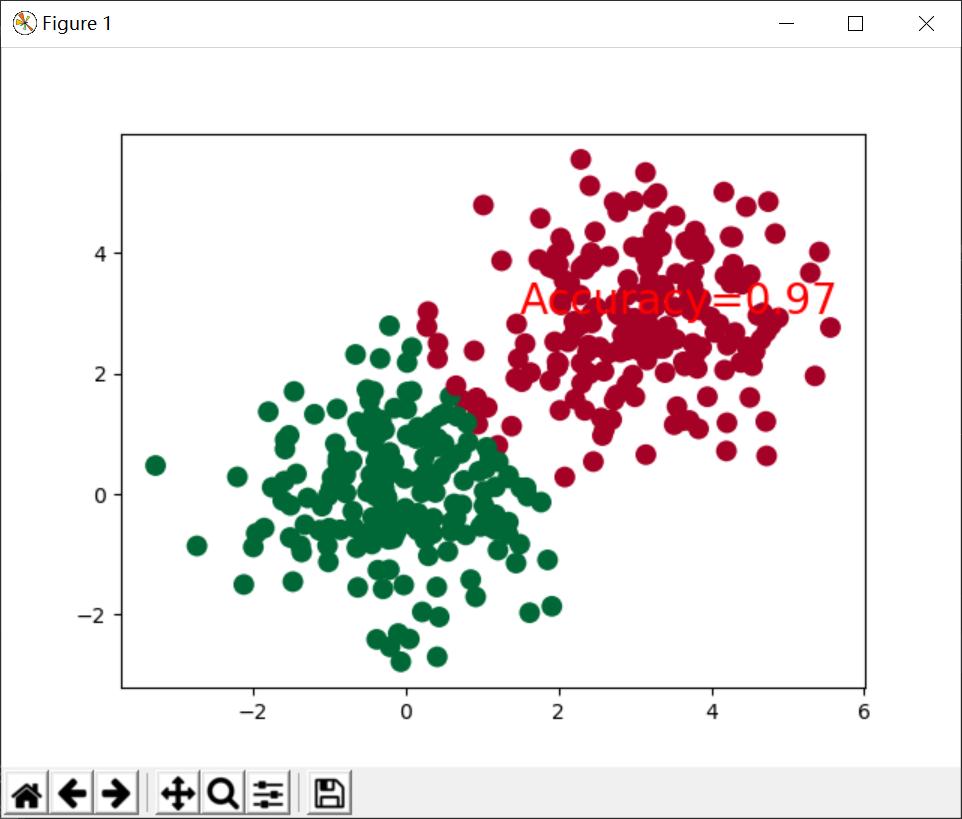

使用单层网络的训练结果:

单层神经网络

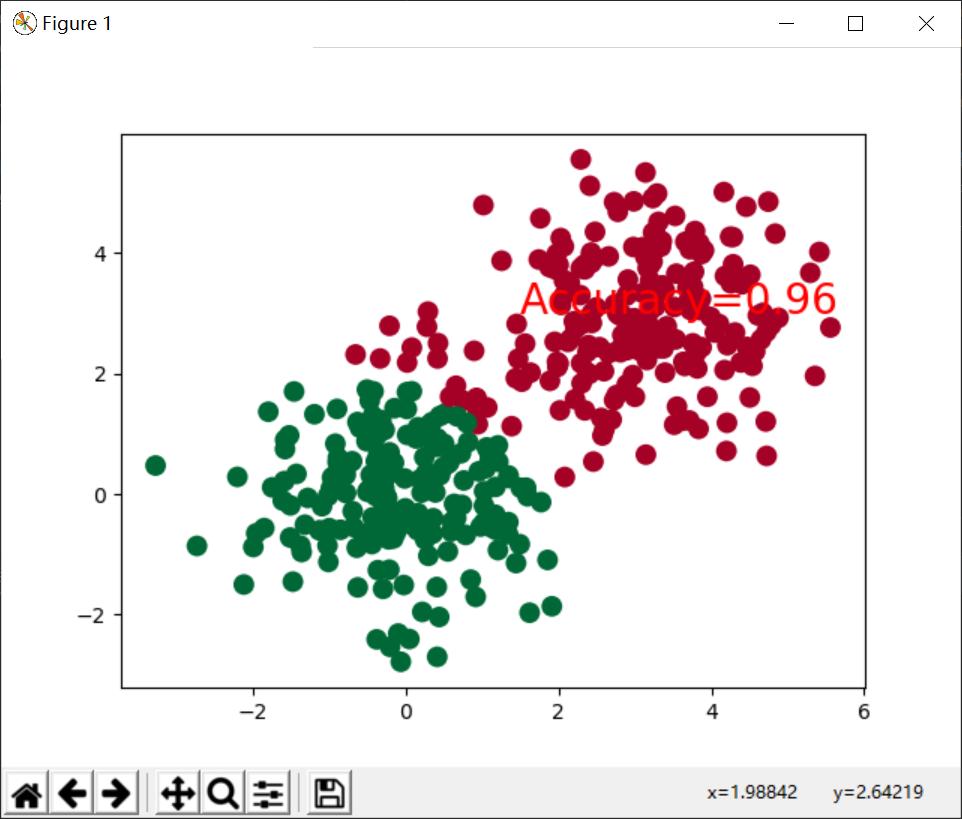

Two-layer neural network
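The article does not list the code behind the two-layer result. One plausible version is shown below. It assumes "two-layer" means two hidden layers of 10 units each, and it changes only the network definition. The data, `DataLoader`, optimizer, and training loop would stay the same. The name `net2` is hypothetical.

```python
import torch

# Hypothetical deeper variant: two hidden layers of 10 units each (an assumption;
# the article does not show the code it used for the two-layer result)
net2 = torch.nn.Sequential(
    torch.nn.Linear(2, 10),
    torch.nn.ReLU(),
    torch.nn.Linear(10, 10),
    torch.nn.ReLU(),
    torch.nn.Linear(10, 2),
)

n_params = sum(p.numel() for p in net2.parameters())
print(n_params)  # 162 = (2*10 + 10) + (10*10 + 10) + (10*2 + 2)

out = net2(torch.randn(4, 2))
print(out.shape)  # torch.Size([4, 2]) -> one score per class, as before
```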

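As the results show, the number of layers has little effect on the predictions for a classification task this simple. For classification tasks with many features, however, we need to add more layers to the network.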