This post covers installing a Hadoop cluster under Docker. Following the steps below, you can quickly install and start a Hadoop cluster.

Prepare the following installation packages

- hadoop-3.2.1.tar.gz

- jdk1.8.tar.gz
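The Dockerfile further down ADDs both tarballs and the three start scripts from the build context, so a missing file only shows up as a failed build. A minimal pre-flight sketch, assuming everything sits in one directory; the helper name `missing_build_files` is made up for illustration, the file names are the ones used in this post.

```shell
#!/bin/sh
# Hypothetical helper: print every file the image build expects that is
# missing from the given build-context directory (default: current directory).
missing_build_files() {
    ctx="${1:-.}"
    for f in hadoop-3.2.1.tar.gz jdk1.8.tar.gz \
             hadoop-master.sh hadoop-slave.sh hadoop-history.sh Dockerfile; do
        # -f is true only for an existing regular file
        [ -f "${ctx}/${f}" ] || echo "${f}"
    done
}
```

Calling `missing_build_files .` prints nothing when the build context is complete.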

Hadoop startup scripts

- hadoop-master.sh

#!/bin/sh

HADOOP_HOME=/usr/local/hadoop-3.2.1

/usr/sbin/sshd

cd ${HADOOP_HOME}

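# Format the NameNode on first run only; an existing VERSION file under
# dfs.namenode.name.dir (see hdfs-site.xml) means it was already formatted

if [ ! -f "${HADOOP_HOME}/namenode/current/VERSION" ]; then
  ${HADOOP_HOME}/bin/hdfs namenode -format
fi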

${HADOOP_HOME}/sbin/start-dfs.sh

${HADOOP_HOME}/sbin/start-yarn.sh

tail -f /dev/null

- hadoop-slave.sh

#!/bin/sh

HADOOP_HOME=/usr/local/hadoop-3.2.1

/usr/sbin/sshd

cd ${HADOOP_HOME}

${HADOOP_HOME}/sbin/start-dfs.sh

${HADOOP_HOME}/sbin/start-yarn.sh

tail -f /dev/null

- hadoop-history.sh

#!/bin/sh

HADOOP_HOME=/usr/local/hadoop-3.2.1

/usr/sbin/sshd

cd ${HADOOP_HOME}

${HADOOP_HOME}/sbin/start-dfs.sh

${HADOOP_HOME}/sbin/start-yarn.sh

${HADOOP_HOME}/sbin/mr-jobhistory-daemon.sh start historyserver

tail -f /dev/null

Docker files

- Dockerfile

FROM centos:latest

ARG tar_file

ARG tar_name

ADD ${tar_file}.tar.gz /usr/local/

ADD hadoop-master.sh /opt/

ADD hadoop-slave.sh /opt/

ADD hadoop-history.sh /opt/

ADD jdk1.8.tar.gz /usr/local/

RUN chmod a+x /opt/hadoop-master.sh /opt/hadoop-slave.sh /opt/hadoop-history.sh \

&& yum install -y passwd openssl openssh-server openssh-clients lsof vim which sudo \

&& /bin/cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime && echo 'Asia/Shanghai' > /etc/timezone \

&& ssh-keygen -t rsa -P '' -f /root/.ssh/id_rsa \

&& cat /root/.ssh/id_rsa.pub >> /root/.ssh/authorized_keys \

&& ssh-keygen -t dsa -N '' -f /etc/ssh/ssh_host_dsa_key \

&& ssh-keygen -t rsa -N '' -f /etc/ssh/ssh_host_rsa_key \

&& ssh-keygen -t ecdsa -N '' -f /etc/ssh/ssh_host_ecdsa_key \

&& ssh-keygen -t ed25519 -N '' -f /etc/ssh/ssh_host_ed25519_key \

&& chmod 700 /root/.ssh/ \

&& chmod 600 /root/.ssh/authorized_keys \

&& cd /usr/local/${tar_name} \

&& mkdir -p tmp namenode datanode

ENV HDFS_DATANODE_USER root

ENV HDFS_NAMENODE_USER root

ENV HDFS_SECONDARYNAMENODE_USER root

ENV HDFS_DATANODE_SECURE_USER hdfs

ENV YARN_RESOURCEMANAGER_USER root

ENV HADOOP_SECURE_DN_USER yarn

ENV YARN_NODEMANAGER_USER root

ENV JAVA_HOME /usr/local/jdk8

ENV CLASSPATH .:$JAVA_HOME/lib

ENV PATH $PATH:$JAVA_HOME/bin

ENV HADOOP_HOME /usr/local/${tar_name}

ENV HADOOP_INSTALL $HADOOP_HOME

ENV HADOOP_MAPRED_HOME $HADOOP_HOME

ENV HADOOP_COMMON_HOME $HADOOP_HOME

ENV HADOOP_HDFS_HOME $HADOOP_HOME

ENV YARN_HOME $HADOOP_HOME

ENV HADOOP_LIBEXEC_DIR $HADOOP_HOME/libexec

ENV HADOOP_COMMON_LIB_NATIVE_DIR $HADOOP_HOME/lib/native

ENV PATH $HADOOP_HOME/sbin:$HADOOP_HOME/bin:$JAVA_HOME/bin:$PATH

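# Dockerfile ENV does not run commands, so "$(... hadoop classpath)" would be stored literally.
# If the Hadoop classpath is needed, export it at runtime in the start script instead:
#   export CLASSPATH=$(${HADOOP_HOME}/bin/hadoop classpath):$CLASSPATH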

# ENTRYPOINT ["/opt/hadoop-master.sh"]

# docker build -t kala/hadoop:2.0 .
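The Dockerfile declares `tar_file` and `tar_name` as build arguments without defaults, so a plain `docker build` like the commented one above leaves both empty. The sketch below passes them with `--build-arg`. The helper name `build_hadoop_image` and the `DRY_RUN` switch are assumptions made for illustration; the tag and version are the ones used in this post.

```shell
#!/bin/sh
# Hypothetical helper: build the image with both build arguments set.
# With DRY_RUN=1 it only prints the command instead of running it.
build_hadoop_image() {
    version="${1:-3.2.1}"           # Hadoop version used in this post
    tag="${2:-kala/hadoop:2.0}"     # image tag used in this post
    set -- docker build \
        --build-arg "tar_file=hadoop-${version}" \
        --build-arg "tar_name=hadoop-${version}" \
        -t "${tag}" .
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}
```

Running `DRY_RUN=1 build_hadoop_image` shows the full command before anything is built. When the image is built through the compose file instead, the same two values come from its `build.args` entries.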

- Docker-Compose.yml

version: "3.7"
services:
  hadoop-master:
    build:
      context: .
      args:
        tar_file: hadoop-3.2.1
        tar_name: hadoop-3.2.1
    image: kala/hadoop:2.0
    container_name: kala-hadoop-master-container
    hostname: hadoopmaster
    command:
      - /bin/sh
      - -c
      - |
        /opt/hadoop-master.sh
    extra_hosts:
      - "hadoopslave1:172.16.0.16"
      - "hadoopslave2:172.16.0.17"
    ports:
      - "19888:19888"
      - "18088:18088"
      - "9870:9870"
      - "9000:9000"
      - "8088:8088"
    volumes:
      - ../volumes/conf:/usr/local/hadoop-3.2.1/etc/hadoop
    networks:
      docker_net:
        ipv4_address: 172.16.0.15
  hadoop-slave1:
    image: kala/hadoop:2.0
    container_name: kala-hadoop-slave1-container
    hostname: hadoopslave1
    command:
      - /bin/sh
      - -c
      - |
        /opt/hadoop-slave.sh
    extra_hosts:
      - "hadoopmaster:172.16.0.15"
      - "hadoopslave2:172.16.0.17"
    volumes:
      - ../volumes/conf:/usr/local/hadoop-3.2.1/etc/hadoop
    networks:
      docker_net:
        ipv4_address: 172.16.0.16
  hadoop-slave2:
    image: kala/hadoop:2.0
    container_name: kala-hadoop-slave2-container
    hostname: hadoopslave2
    command:
      - /bin/sh
      - -c
      - |
        /opt/hadoop-slave.sh
    extra_hosts:
      - "hadoopmaster:172.16.0.15"
      - "hadoopslave1:172.16.0.16"
    volumes:
      - ../volumes/conf:/usr/local/hadoop-3.2.1/etc/hadoop
    networks:
      docker_net:
        ipv4_address: 172.16.0.17
networks:
  docker_net:
    ipam:
      driver: default
      config:
        - subnet: "172.16.0.0/24"
    external:
      name: docker-networks
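The compose file marks `docker_net` as external with the name `docker-networks`, which means Compose expects that network to exist already and will not create it. A minimal sketch for creating it once with the subnet the static addresses rely on; the helper name `ensure_network` and the `DRY_RUN` switch are assumptions, the network name and subnet come from the file above.

```shell
#!/bin/sh
# Hypothetical helper: create the external network if it does not exist yet.
# With DRY_RUN=1 it only prints the create command.
ensure_network() {
    name="${1:-docker-networks}"    # name from the external: block
    subnet="${2:-172.16.0.0/24}"    # subnet from the ipam config
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "docker network create --subnet ${subnet} ${name}"
        return 0
    fi
    # `docker network inspect` exits non-zero when the network is missing
    docker network inspect "${name}" >/dev/null 2>&1 \
        || docker network create --subnet "${subnet}" "${name}"
}
```

Calling `ensure_network` before the first start leaves an existing network untouched.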

Hadoop configuration files

- hadoop-env.sh

# Replace with the path to your JDK
export JAVA_HOME=/usr/local/jdk8

- yarn-env.sh

# Replace with the path to your JDK
export JAVA_HOME=/usr/local/jdk8

- core-site.xml

<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/usr/local/hadoop-3.2.1/tmp</value>
    <description>A base for other temporary directories.</description>
  </property>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://hadoopmaster:18088</value>
  </property>
</configuration>

- hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <property>
    <name>dfs.replication</name>
    <value>2</value>
  </property>
  <!-- Address of the NameNode web UI. 9870 is already the default, so this property can be omitted unless the port changes -->
  <property>
    <name>dfs.namenode.http-address</name>
    <value>hadoopmaster:9870</value>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:/usr/local/hadoop-3.2.1/namenode</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:/usr/local/hadoop-3.2.1/datanode</value>
  </property>
</configuration>

- mapred-site.xml

<?xml version="1.0"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <!-- Required: the framework that runs MapReduce jobs. The default is local, which runs jobs on a single machine instead of on the cluster -->
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>hadoopmaster:19888</value>
  </property>
  <property>
    <name>mapreduce.application.classpath</name>
    <value>$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/*:$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/lib/*</value>
  </property>
</configuration>

- yarn-site.xml

<?xml version="1.0"?>
<configuration>
  <!-- Required: the host that runs the YARN ResourceManager -->
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>hadoopmaster</value>
  </property>
  <!-- Required: the auxiliary service MapReduce uses to fetch (shuffle) data. Empty by default -->
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.nodemanager.env-whitelist</name>
    <value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME</value>
  </property>
</configuration>

- workers

hadoopmaster
hadoopslave1
hadoopslave2
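Several values in these files have to agree with the compose file: for example, the port in `fs.defaultFS` (18088) is one of the published ports. The sketch below reads a single property from a `*-site.xml` file on the host so such values can be checked in a script. It is a rough text match, not an XML parser; the helper name `get_prop` is made up, and it assumes `<value>` follows `<name>` directly, as in the files above.

```shell
#!/bin/sh
# Hypothetical helper: print the value of one property from a Hadoop
# *-site.xml file. Prints nothing if the property is not found.
get_prop() {
    file="$1"
    name="$2"
    # Join the file into one line so the match works whether or not
    # <name> and <value> are on separate lines.
    tr -d '\n' < "${file}" \
        | sed -n "s|.*<name>${name}</name>[[:space:]]*<value>\([^<]*\)</value>.*|\1|p"
}
```

For example, `get_prop ../volumes/conf/core-site.xml fs.defaultFS` reads the value from the directory that the compose file mounts into each container. Inside a running container, `hdfs getconf -confKey fs.defaultFS` reports the value Hadoop actually resolved.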

Starting and stopping the cluster

- Start the Hadoop Docker services

docker-compose -f Docker-Compose.yml up

- Stop the Hadoop Docker services

docker-compose -f Docker-Compose.yml down

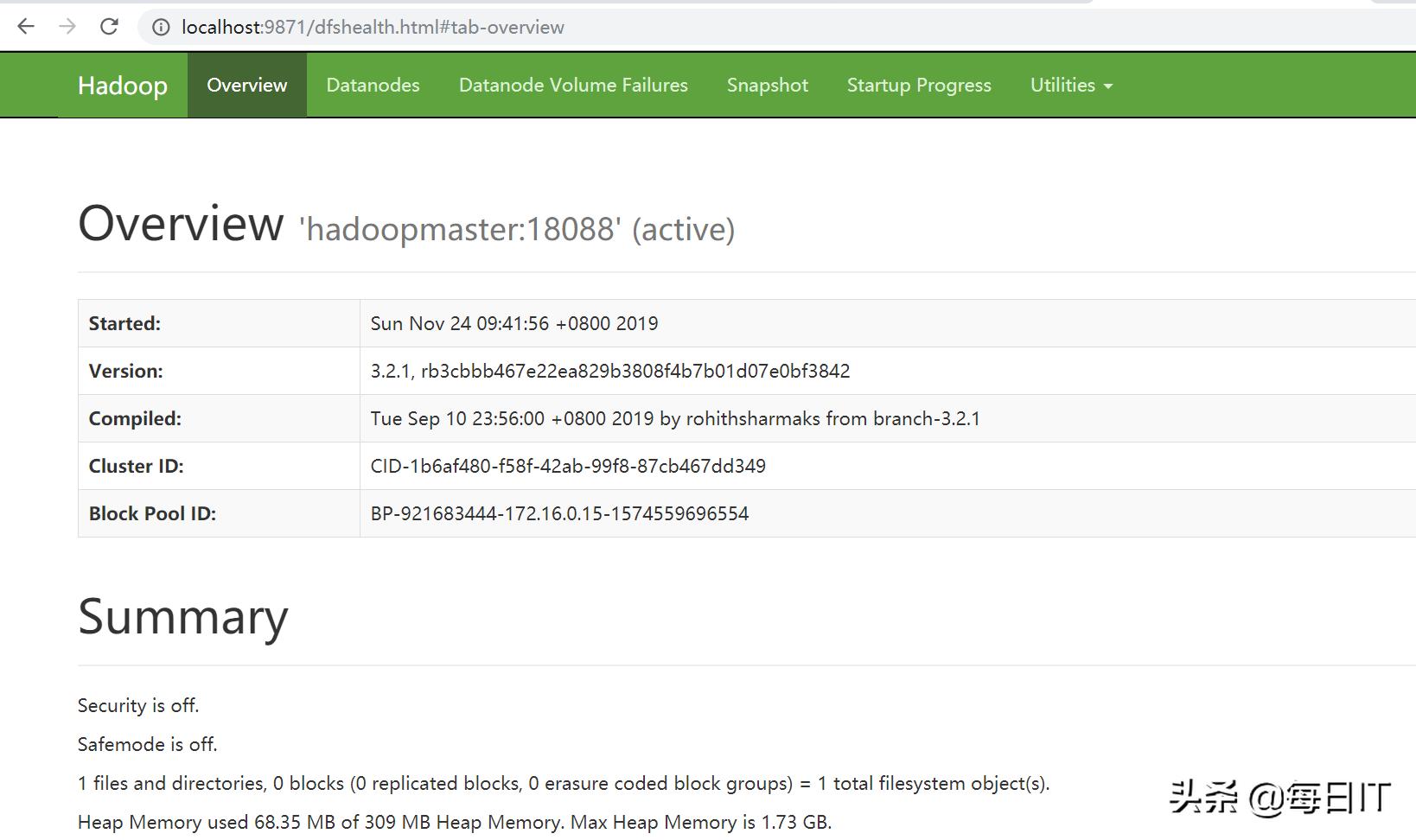

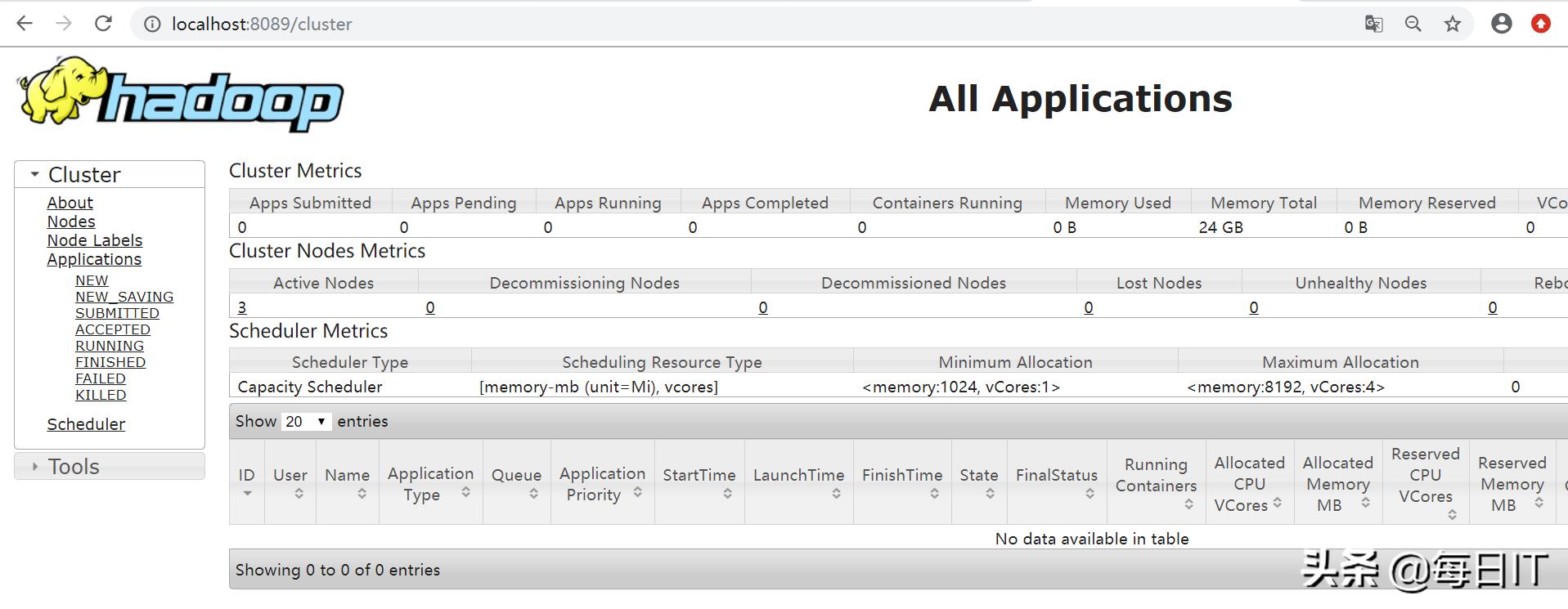
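Once the containers are up, running `jps` inside the master container is a quick way to see which Hadoop daemons actually started. The sketch below reads `jps` output and prints the daemons that are missing. The helper name `missing_daemons` is made up, and the expected list is an assumption: because `workers` includes `hadoopmaster`, the master should run a DataNode and a NodeManager alongside the NameNode, SecondaryNameNode and ResourceManager.

```shell
#!/bin/sh
# Hypothetical helper: read `jps` output on stdin and print each expected
# master daemon that is not listed.
missing_daemons() {
    jps_out=$(cat)
    for d in NameNode SecondaryNameNode DataNode ResourceManager NodeManager; do
        # jps prints "<pid> <class name>", one JVM per line; compare the
        # second field exactly so NameNode does not match SecondaryNameNode
        echo "${jps_out}" \
            | awk -v d="${d}" '$2 == d { found = 1 } END { exit !found }' \
            || echo "${d}"
    done
}
```

A possible use is `docker exec kala-hadoop-master-container jps | missing_daemons`, which prints nothing when all five daemons are running.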

Accessing the Hadoop cluster

- NameNode web UI

http://nn_host:9870/

- ResourceManager web UI

http://nn_host:8088/

- MapReduce JobHistory Server web UI

http://nn_host:19888/
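Because the compose file publishes ports 9870, 8088 and 19888 on the master, `nn_host` here is the machine running Docker. The daemons take a moment to come up, so a script may want to poll these URLs instead of opening them right away. A minimal sketch, assuming `curl` is installed; the helper names `ui_urls` and `wait_for_url` are made up for illustration.

```shell
#!/bin/sh
# Hypothetical helper: print the three web UI URLs for a given host.
ui_urls() {
    host="${1:-localhost}"
    for port in 9870 8088 19888; do
        echo "http://${host}:${port}/"
    done
}

# Hypothetical helper: poll a URL until it answers or the attempts run out.
wait_for_url() {
    url="$1"
    attempts="${2:-30}"
    while [ "${attempts}" -gt 0 ]; do
        # -s silent, -f fail on HTTP errors, -o discard the response body
        curl -sf -o /dev/null --max-time 2 "${url}" && return 0
        attempts=$((attempts - 1))
        sleep 2
    done
    return 1
}
```

One way to use it: `for u in $(ui_urls localhost); do wait_for_url "$u" 5 || echo "not reachable: $u"; done`. Note that no service in the compose file above runs `hadoop-history.sh`, so port 19888 may not answer unless the history server is started separately.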
