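Rancher is a container management platform built for organizations that run containers. It simplifies working with Kubernetes: developers can run Kubernetes everywhere, meet IT requirements, and empower DevOps teams. Rancher 2.x has moved entirely to Kubernetes and can deploy and manage Kubernetes clusters running anywhere.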

There are currently two ways to deploy Elasticsearch on K8s: the All-in-One manifest (https://github.com/elastic/cloud-on-k8s) and Helm.

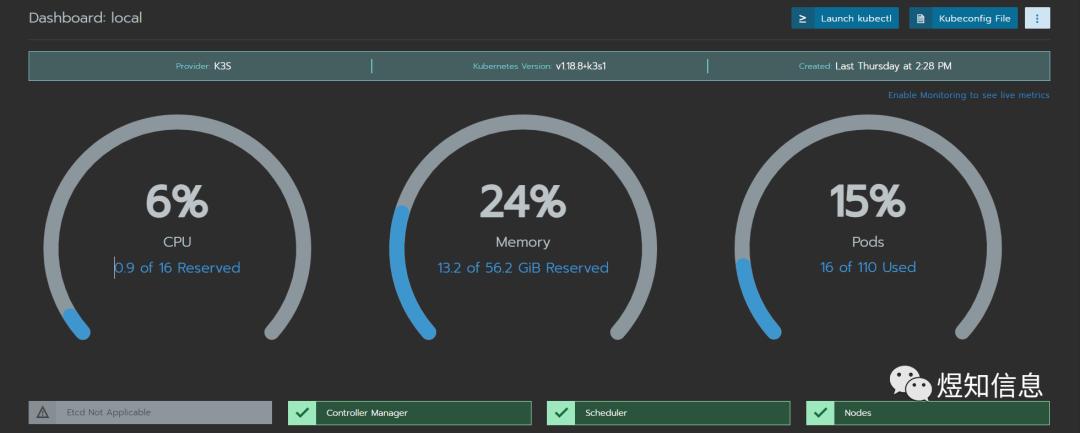

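What follows is a step-by-step walkthrough of installing ECK on Rancher (k3s) on Docker. The steps have been verified on a Mac and on Ubuntu under WSL2, and I hope they help newcomers.

This article does not cover the basic concepts of Rancher, k8s, or Elastic Cloud on Kubernetes. If you need that background, see the following links: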

- Rancher: https://rancher.com/

- ECK: https://www.elastic.co/guide/en/cloud-on-k8s/current/index.html

- Kubernetes: https://kubernetes.io/

Installing Rancher

Install k3s by following the official documentation:

$ sudo docker run --privileged -d --restart=unless-stopped -p 80:80 -p 443:443 rancher/rancher

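The Docker flag "--privileged" is required here; it grants the container extended privileges. Upgrading to version 2.5.5 is recommended so that you can try the latest Cluster Explorer interface.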
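To follow the version advice, the image tag can be pinned rather than left to the default. The sketch below wraps the same docker run command in a small function. The RANCHER_VERSION variable and the start_rancher name are illustrative and not part of the original steps, and the tag v2.5.5 is assumed to be the published tag for version 2.5.5.

```shell
# Assumed tag format for the 2.5.5 release; adjust if your registry differs.
RANCHER_VERSION="${RANCHER_VERSION:-v2.5.5}"

# start_rancher is a hypothetical helper: same flags as the command above,
# but with an explicit image tag so that upgrades are deliberate.
start_rancher() {
  docker run --privileged -d --restart=unless-stopped \
    -p 80:80 -p 443:443 \
    "rancher/rancher:${RANCHER_VERSION}"
}

# Usage (prefix with sudo if your user cannot talk to the Docker daemon):
#   start_rancher
```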

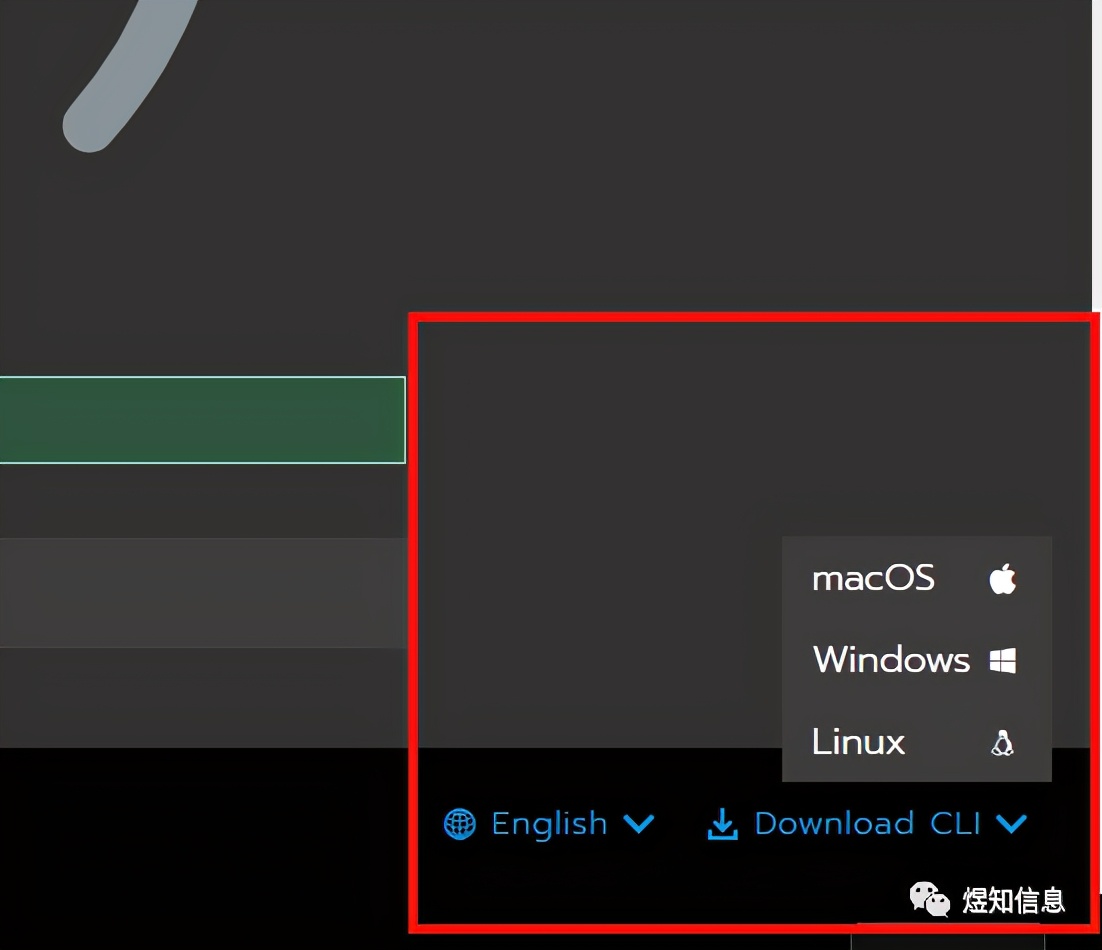

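Some of the later steps are carried out with kubectl. From a professional standpoint the CLI is the recommended route, since working through the web UI is far too slow.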

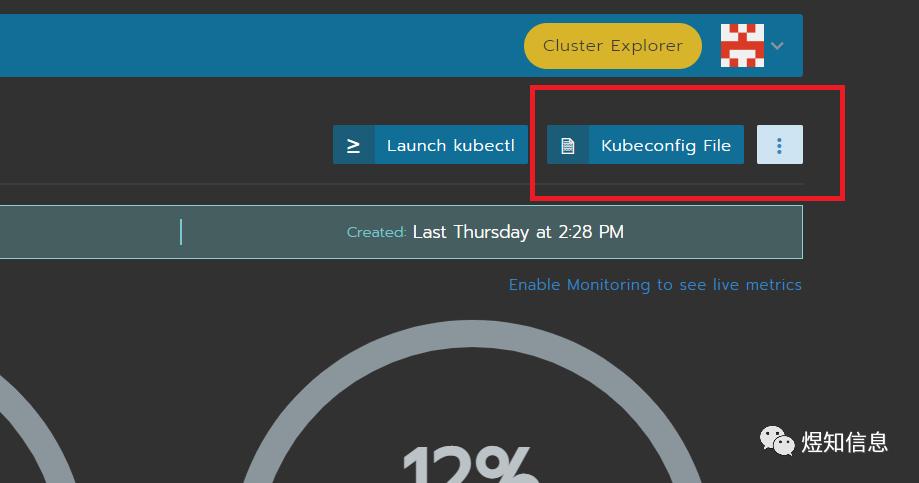

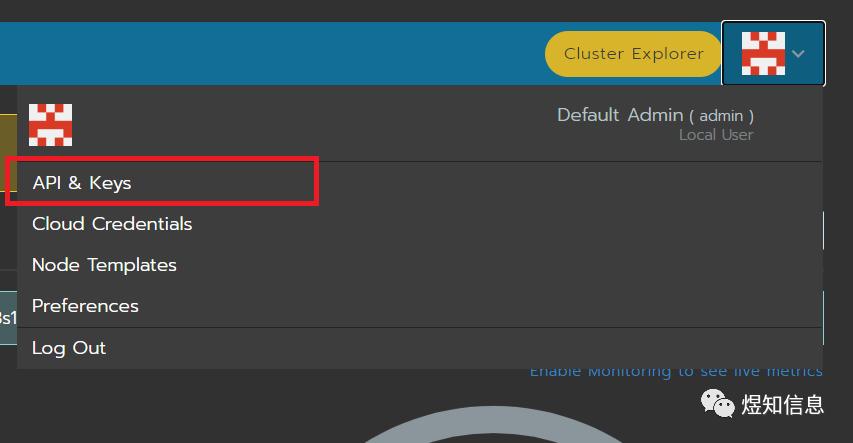

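Click the Kubeconfig file link on the management home page and copy its contents into the configuration file in your home directory (usually ~/.kube/config). Then click the user avatar and create a new API key:

Next, log in: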

$ ./rancher login https://localhost/v3 --token token-vvc4g:jf46m79l2ml49n8qpkq7cdnd8wlfwndjvnwvjds4rcvq5cb8z4gn6b

The authenticity of server 'https://localhost' can't be established.

Cert chain is : [Certificate:

Data:

Version: 3 (0x2)

Serial Number: 3145037638851565576 (0x2ba56b11c4fb1808)

(output trimmed for brevity ......)

Signature Algorithm: ECDSA-SHA256

30:45:02:20:61:9d:15:bc:aa:18:88:9e:54:7b:06:33:62:15:

]

Do you want to continue connecting (yes/no)? yes

INFO[0001] Saving config to /home/billy/.rancher/cli2.json

Installing ECK

ECK can be installed in two ways: All-in-One or Helm.

- All-in-One

$ kubectl apply -f https://download.elastic.co/downloads/eck/1.4.0/all-in-one.yaml

- Helm

$ helm repo add elastic https://helm.elastic.co

$ helm repo update

Run the default installation:

$ helm install elastic-operator elastic/eck-operator -n elastic-system --create-namespace

Once the installation finishes, allow some time (downloads can be slow on networks in China) and then check the logs:

$ kubectl -n elastic-system logs -f statefulset.apps/elastic-operator

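Installing the ES Cluster Nodes

In this example we create 3 master nodes and 3 data nodes. If you follow the official ES documentation to install the cluster nodes, you will run into many PV (PersistentVolume) and PVC (PersistentVolumeClaim) problems. The reason is that Rancher on Docker is not a real k8s cluster; it is a "fake" cluster, so you have to create the PVs by hand. Longhorn, which Rancher recommends, cannot be installed on this "fake" cluster either, so Local Storage is recommended here.

- Create the Storage Class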

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: local-storage
provisioner: kubernetes.io/no-provisioner
volumeBindingMode: WaitForFirstConsumer
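The steps here do not show how the StorageClass manifest gets applied. One option, sketched below, is to feed it to kubectl apply on stdin. The function name create_local_storage_class is made up for this example.

```shell
# create_local_storage_class: hypothetical helper that applies the
# StorageClass manifest shown above without a separate file.
create_local_storage_class() {
  kubectl apply -f - <<'EOF'
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: local-storage
provisioner: kubernetes.io/no-provisioner
volumeBindingMode: WaitForFirstConsumer
EOF
}

# Usage:
#   create_local_storage_class
#   kubectl get storageclass local-storage
```

Because volumeBindingMode is WaitForFirstConsumer, a PVC that uses this class stays Pending until a pod that needs it is scheduled.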

- Create the PVs (from a YAML file)

$ kubectl apply -f pvdemo.yaml

The pvdemo.yaml file is as follows:

apiVersion: v1
kind: PersistentVolume
metadata:
  name: elasticsearch1
spec:
  capacity:
    storage: 20Gi
  accessModes:
  - ReadWriteOnce
  hostPath:
    path: "/data/elasticsearch1/"
  storageClassName: local-storage
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: elasticsearch2
spec:
  capacity:
    storage: 20Gi
  accessModes:
  - ReadWriteOnce
  hostPath:
    path: "/data/elasticsearch2/"
  storageClassName: local-storage
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: elasticsearch3
spec:
  capacity:
    storage: 20Gi
  accessModes:
  - ReadWriteOnce
  hostPath:
    path: "/data/elasticsearch3/"
  storageClassName: local-storage
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: elasticsearch4
spec:
  capacity:
    storage: 20Gi
  accessModes:
  - ReadWriteOnce
  hostPath:
    path: "/data/elasticsearch4/"
  storageClassName: local-storage
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: elasticsearch5
spec:
  capacity:
    storage: 20Gi
  accessModes:
  - ReadWriteOnce
  hostPath:
    path: "/data/elasticsearch5/"
  storageClassName: local-storage
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: elasticsearch6
spec:
  capacity:
    storage: 20Gi
  accessModes:
  - ReadWriteOnce
  hostPath:
    path: "/data/elasticsearch6/"
  storageClassName: local-storage
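The six PV definitions differ only in their index, so the file can also be generated instead of written by hand. The loop below is a sketch that writes the same six manifests to pvdemo.yaml. The gen_pvs function name and its optional arguments are illustrative.

```shell
# gen_pvs COUNT FILE: write COUNT hostPath PVs (elasticsearch1..COUNT) to FILE.
gen_pvs() {
  count="${1:-6}"
  out="${2:-pvdemo.yaml}"
  : > "$out"                      # create or truncate the output file
  i=1
  while [ "$i" -le "$count" ]; do
    # Separate YAML documents with '---', but not before the first one.
    if [ "$i" -gt 1 ]; then
      echo "---" >> "$out"
    fi
    cat >> "$out" <<EOF
apiVersion: v1
kind: PersistentVolume
metadata:
  name: elasticsearch${i}
spec:
  capacity:
    storage: 20Gi
  accessModes:
  - ReadWriteOnce
  hostPath:
    path: "/data/elasticsearch${i}/"
  storageClassName: local-storage
EOF
    i=$((i + 1))
  done
}

gen_pvs 6 pvdemo.yaml
# Then apply it as before: kubectl apply -f pvdemo.yaml
```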

Once the PVs exist, create the ES nodes from a YAML file:

$ kubectl apply -f esdemo.yaml

The esdemo.yaml file looks like this:

apiVersion: elasticsearch.k8s.elastic.co/v1
kind: Elasticsearch
metadata:
  name: es-demo-cluster
spec:
  version: 7.11.1
  nodeSets:
  # Three dedicated master nodes
  - name: masters
    count: 3
    config:
      node.roles: ["master"]
    volumeClaimTemplates:
    - metadata:
        name: elasticsearch-data
      spec:
        accessModes:
        - ReadWriteOnce
        resources:
          requests:
            storage: 10Gi
        storageClassName: local-storage
  # Three data nodes (3 masters + 3 data = the six PVs created above)
  - name: data
    count: 3
    config:
      node.roles: ["data"]
    volumeClaimTemplates:
    - metadata:
        name: elasticsearch-data
      spec:
        accessModes:
        - ReadWriteOnce
        resources:
          requests:
            storage: 10Gi
        storageClassName: local-storage
---
apiVersion: kibana.k8s.elastic.co/v1
kind: Kibana
metadata:
  name: kibana-demo
spec:
  version: 7.11.1
  count: 1
  elasticsearchRef:
    name: "es-demo-cluster"
    namespace: default

After a short wait, you should see results like the following:

$ kubectl get elasticsearch

NAME HEALTH NODES VERSION PHASE AGE

es-demo-cluster green 6 7.11.1 Ready 2d4h

$ kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

es-demo-cluster-es-data ClusterIP None <none> 9200/TCP 2d4h

es-demo-cluster-es-http ClusterIP 10.43.182.49 <none> 9200/TCP 2d4h

es-demo-cluster-es-masters ClusterIP None <none> 9200/TCP 2d4h

es-demo-cluster-es-transport ClusterIP None <none> 9300/TCP 2d4h

kibana-demo-kb-http ClusterIP 10.43.149.151 <none> 5601/TCP 2d5h

kubernetes ClusterIP 10.43.0.1 <none> 443/TCP 3d
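Instead of re-running kubectl get elasticsearch by hand, the wait can be scripted. The sketch below polls the health reported in the resource status (the same value shown in the HEALTH column) until it is green. The wait_for_green name, the retry count, and the POLL_SECONDS variable are assumptions made for this example.

```shell
# wait_for_green NAME [TRIES]: poll the Elasticsearch resource until its
# reported health is "green". Returns 1 if that never happens.
wait_for_green() {
  name="$1"
  tries="${2:-60}"
  while [ "$tries" -gt 0 ]; do
    health=$(kubectl get elasticsearch "$name" -o jsonpath='{.status.health}')
    if [ "$health" = "green" ]; then
      echo "cluster $name is green"
      return 0
    fi
    tries=$((tries - 1))
    sleep "${POLL_SECONDS:-10}"   # pause between polls (default 10s)
  done
  echo "cluster $name did not turn green in time" >&2
  return 1
}

# Usage:
#   wait_for_green es-demo-cluster
```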

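And that's it.

Accessing the ES Service

One important task remains: accessing the ES cluster service. By default, the ES services in ECK are already encrypted with certificates provisioned through k8s. Custom certificates can also be configured; see the official ECK documentation for details. The example below uses the default certificates. Because this is a single-machine demo, we port-forward the service to a local port:

- Port-forward the ES service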

$ kubectl port-forward service/es-demo-cluster-es-http 9200

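(*Note: in a clustered environment, to make the forwarded port reachable on the host's IP addresses rather than only on localhost, add the --address parameter.)

$ kubectl port-forward --address 0.0.0.0 service/es-demo-cluster-es-http 9200:9200

- Look up the ES cluster user and password (token)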

$ kubectl get secret

NAME TYPE DATA AGE

default-kibana-demo-kibana-user Opaque 3 2d4h

default-token-5xs96 kubernetes.io/service-account-token 3 3d

es-demo-cluster-es-data-es-config Opaque 1 2d4h

es-demo-cluster-es-data-es-transport-certs Opaque 7 2d4h

es-demo-cluster-es-elastic-user Opaque 1 2d4h

es-demo-cluster-es-http-ca-internal Opaque 2 2d4h

es-demo-cluster-es-http-certs-internal Opaque 3 2d4h

es-demo-cluster-es-http-certs-public Opaque 2 2d4h

es-demo-cluster-es-internal-users Opaque 2 2d4h

es-demo-cluster-es-masters-es-config Opaque 1 2d4h

es-demo-cluster-es-masters-es-transport-certs Opaque 7 2d4h

es-demo-cluster-es-remote-ca Opaque 1 2d4h

es-demo-cluster-es-transport-ca-internal Opaque 2 2d4h

es-demo-cluster-es-transport-certs-public Opaque 1 2d4h

es-demo-cluster-es-xpack-file-realm Opaque 3 2d4h

kibana-demo-kb-config Opaque 2 2d5h

kibana-demo-kb-es-ca Opaque 2 2d5h

kibana-demo-kb-http-ca-internal Opaque 2 2d5h

kibana-demo-kb-http-certs-internal Opaque 3 2d5h

kibana-demo-kb-http-certs-public Opaque 2 2d5h

kibana-demo-kibana-user Opaque 1 2d5h

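The list shows the secrets of the ES cluster, including its certificates and credentials. We use the user "elastic" to access the ES service:

$ kubectl get secret es-demo-cluster-es-elastic-user -o=jsonpath='{.data.elastic}' | base64 --decode; echo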

z0ioASEfZ883Vqq46ci562g9
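Kubernetes stores Secret values base64-encoded, which is why the command pipes its output through base64 --decode. To avoid copying the password by hand, it can be captured in a shell variable. This is a minimal sketch; es_password is a hypothetical helper name.

```shell
# es_password CLUSTER: print the decoded password of the built-in "elastic"
# user for the given ECK cluster (secret name: <CLUSTER>-es-elastic-user).
es_password() {
  kubectl get secret "$1-es-elastic-user" \
    -o=jsonpath='{.data.elastic}' | base64 --decode
}

# Usage:
#   PASSWORD=$(es_password es-demo-cluster)
```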

With the password (z0ioASEfZ883Vqq46ci562g9) in hand, you can access the ES service:

$ curl https://localhost:9200 -u elastic:z0ioASEfZ883Vqq46ci562g9 -k

{
  "name" : "es-demo-cluster-es-masters-1",
  "cluster_name" : "es-demo-cluster",
  "cluster_uuid" : "5i3kiPMURgKfa1H4mm9bmg",
  "version" : {
    "number" : "7.11.1",
    "build_flavor" : "default",
    "build_type" : "docker",
    "build_hash" : "ff17057114c2199c9c1bbecc727003a907c0db7a",
    "build_date" : "2021-02-15T13:44:09.394032Z",
    "build_snapshot" : false,
    "lucene_version" : "8.7.0",
    "minimum_wire_compatibility_version" : "6.8.0",
    "minimum_index_compatibility_version" : "6.0.0-beta1"
  },
  "tagline" : "You Know, for Search"
}
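While the port-forward is still running, the same credentials work against other REST endpoints. As a sketch, the helper below sends a GET request to a given path, for example _cluster/health. The es_get name is made up, and ES_PASSWORD is assumed to hold the password decoded earlier.

```shell
# es_get PATH: GET an endpoint on the port-forwarded ES service.
# -k skips certificate verification, as in the curl command above.
# ES_PASSWORD is an assumed variable holding the "elastic" user's password.
es_get() {
  curl -s -k -u "elastic:${ES_PASSWORD}" "https://localhost:9200/$1"
}

# Usage:
#   ES_PASSWORD=z0ioASEfZ883Vqq46ci562g9   # the value decoded earlier
#   es_get '_cluster/health?pretty'
```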

Likewise, forward port 5601 to reach Kibana in a browser; the details are omitted here. Remember that it is an HTTPS service (https://localhost:5601).
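For completeness, the Kibana forward could look like the following sketch. It assumes the service name kibana-demo-kb-http from the kubectl get svc listing; forward_kibana is an illustrative name.

```shell
# forward_kibana: hypothetical helper that forwards local port 5601 to the
# Kibana HTTP service created by ECK. Runs until interrupted with Ctrl-C.
forward_kibana() {
  kubectl port-forward service/kibana-demo-kb-http 5601
}

# Usage:
#   forward_kibana
#   then open https://localhost:5601 and log in as the "elastic" user
```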

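Closing Remarks

Installing ES involves a few small pitfalls, but with a reasonable understanding of k8s they are easy to get past. I hope this article has been of some help.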